1205-0040_SupportPartB_FINAL_edited_3-23-2015


Senior Community Service Employment Program Performance Measurement System

OMB: 1205-0040

THE SENIOR COMMUNITY SERVICE EMPLOYMENT PROGRAM

(SCSEP): OMB 1205-0040

Supporting Statements, Part B

Part B

COLLECTIONS OF INFORMATION EMPLOYING STATISTICAL METHODS

Using the American Customer Satisfaction Index to Measure Customer Satisfaction in the Senior Community Service Employment Program

1. Describe (including a numerical estimate) the potential respondent universe and any sampling or other respondent selection method to be used. Data on the number of entities (e.g. establishments, State and local governmental units, households, or persons) in the universe and the corresponding sample are to be provided in tabular form. The tabulation must also include expected response rates for the collection as a whole. If the collection has been conducted before, provide the actual response rate achieved.

Title V of the Older Americans Act requires that the Senior Community Service Employment Program (SCSEP) conduct customer satisfaction surveys for each of its three customer groups: participants, host agencies, and employers. The Department of Labor's (DOL's) Employment and Training Administration (ETA) is using the American Customer Satisfaction Index (ACSI) to meet the customer satisfaction measurement needs of several ETA programs, including SCSEP. SCSEP has been conducting these surveys nationwide since 2004.

The measure adopted by SCSEP to satisfy the statutory requirement is the ACSI. The survey approach employed allows the program flexibility and, at the same time, captures common customer satisfaction information that can be aggregated and compared among national and state grantees. The measure is a customer satisfaction index built from three core questions, each addressing a different dimension of the customer's experience; the scores on those three questions are combined to form the index. Additional questions that do not affect the assessment of grantee performance are included to allow grantees to manage the program effectively.

The ACSI is a widely used customer satisfaction measurement approach. It is used extensively in the business communities in Europe and the United States, including more than 200 companies in 44 industries. In addition, over 100 Federal government agencies have used the ACSI to measure citizen satisfaction with more than 200 services and programs.

SCSEP currently funds 72 grantees: 16 national grantees operating in 49 states and territories, and 56 state or territorial grantees.

NATIONAL GRANTEES |

Associates for Training & Development |

AARP Foundation Programs |

Asociación Nacional Para Personas Mayores de Edad |

Easter Seals |

Experience Works |

Goodwill Industries International |

Mature Services |

National Able Network |

National Asian Pacific Center on Aging [General Grant] |

National Asian Pacific Center on Aging [Set-aside Grant] |

National Caucus and Center on Black Aged |

National Council on the Aging |

National Indian Council on the Aging [Set-aside Grant] |

National Urban League |

SER Jobs for Progress National |

Senior Service America |

STATE GRANTEES |

Alabama Department of Senior Services |

Alaska Department of Labor and Workforce Development |

Arizona Department of Economic Security |

Arkansas Department of Human Services, Division of Aging and Adult Services |

California Department of Aging |

Colorado Department of Human Services, Aging and Adult Services |

Connecticut Department of Social Services |

Delaware Division of Services for Aging and Adults with Physical Disabilities |

District of Columbia Department of Employment Services |

Florida Department of Elder Affairs |

Georgia Department of Human Services |

Hawaii Department of Labor And Industrial Relations |

Idaho Commission on Aging |

Illinois Department on Aging |

Indiana Family and Social Services Administration |

Iowa Department on Aging |

Kansas Department of Commerce, Business Development Division |

Kentucky Division of Aging Services |

Louisiana Governor's Office of Elderly Affairs |

Maine Office of Elder Services |

Maryland Department of Aging |

Massachusetts Executive Office of Elder Affairs |

Michigan Office of Services to the Aging |

Minnesota Department of Employment and Economic Development |

Mississippi Department of Employment Security |

Missouri Department of Health and Senior Services |

Montana Department of Labor and Industry |

Nebraska State Unit on Aging |

Nevada Aging and Disabilities Services Division |

New Hampshire Department of Resources and Economic Development |

New Jersey Department of Labor and Workforce Development |

New Mexico Aging and Long-Term Services Department |

New York State Office for the Aging |

North Carolina Department of Human Services, Division of Aging And Adult |

North Dakota Department of Human Services |

Ohio Department of Aging |

Oklahoma Employment Security Commission |

Oregon Department of Human Services, Aging and People with Disabilities |

Pennsylvania Department of Aging and Long Term Living |

Puerto Rico Department of Labor |

Rhode Island Department of Elderly Affairs |

South Carolina Lt. Governor's Office on Aging |

South Dakota Department of Labor and Regulation |

Tennessee Department of Labor And Workforce Development |

Texas Workforce Commission |

Utah Department of Human Services |

Vermont Agency of Human Services |

Virginia Department for Aging and Rehabilitative Services |

Washington Department of Social and Health Services, State Unit on Aging |

West Virginia Bureau of Senior Services |

Wisconsin Department of Health Services, Bureau of Aging and Disability Resources |

Wyoming Department of Workforce Services |

American Samoa Territorial Administration on Aging |

Guam Department of Labor |

Northern Mariana Islands, Office on Aging |

Virgin Islands Department of Labor |

A. Participant and Host Agency Surveys

1. Who is Surveyed

Participants - All SCSEP participants who are active at the time of the survey or have been active in the previous 12 months are eligible to be chosen for inclusion in the random sample of records. In PY 2014, there were approximately 66,000 participants eligible for the survey.

Host Agency Contacts - Host agencies are public agencies, units of government, and non-profit agencies that provide subsidized employment, training, and related services to SCSEP participants. All host agencies that are active at the time of the survey or that have been active in the preceding 12 months are eligible for inclusion in the sample of records. In PY 2014, there were approximately 30,000 host agencies eligible for the survey.

2. Sample Size and Procedures

The national and state grantees follow parallel, complementary procedures. The national grantees have a target of 370 at the first stage of sampling and, at the second stage, a target of 70 for each state in which the grantee provides services. The state grantees have only one stage in their sampling procedure. A sample of 370 is expected to yield 222 completed surveys at a 60 percent response rate. Under this procedure, each state grantee should obtain 222 completed surveys each year from each of the two customer groups, participants and host agencies. Each national grantee will obtain at least 222 completed surveys from each customer group; the exact number depends on the number of states in which the grantee operates. Where the number eligible for the survey is small and 222 completed surveys are not attainable (a situation common to state grantees), the sample includes all participants or host agencies. The surveys of participants and host agencies are conducted through a mail house once each program year.

The actual number of participants sampled in PY 2013 was 21,361. There were 12,804 returned surveys that had answers to all three ACSI questions, for a response rate of 59.9%. In PY 2013, 13,722 host agencies were surveyed. There were 8,077 returned surveys, for a response rate of 58.9%. For both surveys, all participants and host agencies that were active within the 12 months prior to the drawing of the samples were eligible to be surveyed.
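The arithmetic behind the planning targets and the PY 2013 response rates can be checked with a short sketch. The function names are illustrative only and are not part of SPARQ or any contractor system; the inputs are the figures stated in this section.

```python
def expected_completes(sample_size: int, response_rate: float) -> int:
    """Expected number of returned surveys for a given sample and response rate."""
    return round(sample_size * response_rate)


def response_rate_pct(returned: int, surveyed: int) -> float:
    """Response rate as a percentage, rounded to one decimal place."""
    return round(100 * returned / surveyed, 1)


# Planning targets: 370 per grantee and 70 per state at a 60 percent rate.
grantee_completes = expected_completes(370, 0.60)
state_completes = expected_completes(70, 0.60)

# PY 2013 actuals: returned surveys divided by surveys mailed.
participant_rate = response_rate_pct(12_804, 21_361)
host_agency_rate = response_rate_pct(8_077, 13_722)

print(grantee_completes, state_completes, participant_rate, host_agency_rate)
```

The response rate here counts only returned surveys with answers to all three ACSI questions in the numerator, as described above.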

The attached response rate tables for the PY 2013 participant and host agency surveys show the total sample and the number of returned surveys that had answers to all three ACSI questions.

The number of actual responses as set forth in Section 3 below differs from the number expected by the sampling procedure because the large national grantees operate in many states and sampling 70 from each of those states yields far more than the minimum sample of 370 would yield. At the same time, most state grantees do not have sufficient participants or host agencies to permit sampling, so all participants and host agencies are surveyed.

3. Response Rates

Response rates achieved for the participant and host agency surveys since 2004 have ranged from 56 percent to 70 percent. Participant and host agency response rates for PY 2013 are slightly below 60 percent.

We have received more than 200,000 responses since PY 2004: 132,281 from the participant survey and 81,576 from the host agency survey. Below is a table of responses and response rates. (Note: There were no surveys for PY 2007, and in PY 2011 there was no host agency survey.)

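Participant ACSI Response Rate

| Program Year | PY04 | PY05 | PY06 | PY07 | PY08 | PY09 | PY10 | PY11 | PY12 | PY13 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Response Rate | 69.2% | 62.9% | 61.7% | no survey | 56.1% | 63.8% | 62.7% | 60.6% | 63.9% | 59.9% |
| # of Responses | 15,662 | 15,806 | 14,317 | no survey | 13,522 | 15,535 | 15,969 | 14,822 | 13,844 | 12,804 |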

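Host Agency ACSI Response Rate

| Program Year | PY04 | PY05 | PY06 | PY07 | PY08 | PY09 | PY10 | PY11 | PY12 | PY13 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Response Rate | 69.8% | 67.9% | 64.5% | no survey | 62.3% | 64.6% | 60.4% | no survey | 62.3% | 58.9% |
| # of Responses | 10,655 | 10,314 | 10,679 | no survey | 10,569 | 11,331 | 10,606 | no survey | 9,345 | 8,077 |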

B. Employer Surveys

1. Who Is Surveyed

Employers that hire SCSEP participants and employ them in unsubsidized jobs are included in the Employer Survey. To be considered eligible for the survey, the employer: 1) must not have served as a host agency in the past 12 months; and 2) must have had substantial contact with the sub-grantee in connection with hiring of the participant; and 3) must not have received another survey from this program during the current program year.

All employers that meet the three criteria described above are surveyed at the time the sub-grantee conducts the first case management follow-up, which typically occurs 30-45 days after the date of placement.

2. Sample Size and Procedures

All employers meeting the three criteria described above will be surveyed. No sampling is used.
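The three eligibility criteria can be expressed as a single check. This is a minimal sketch: the `EmployerPlacement` record and its field names are hypothetical and do not correspond to actual SPARQ fields.

```python
from dataclasses import dataclass


@dataclass
class EmployerPlacement:
    # Hypothetical fields chosen for illustration; not SPARQ field names.
    was_host_agency_past_12_months: bool
    had_substantial_subgrantee_contact: bool
    surveyed_this_program_year: bool


def is_eligible_for_employer_survey(p: EmployerPlacement) -> bool:
    """Return True only when all three eligibility criteria are met."""
    return (
        not p.was_host_agency_past_12_months
        and p.had_substantial_subgrantee_contact
        and not p.surveyed_this_program_year
    )


# An employer that was never a host agency, worked directly with the
# sub-grantee on the hire, and has not yet been surveyed this year.
example = EmployerPlacement(False, True, False)
print(is_eligible_for_employer_survey(example))
```

Because no sampling is used, every placement that passes this check would receive a survey at the first case management follow-up.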

For the employer surveys, all qualified employers are surveyed because the number of qualified employers is relatively low. Employers qualify for the survey if they did not also serve as host agencies, if the grantee was directly involved in making the placement, and if the employer was aware of the grantee’s involvement. Employers are only surveyed for the first hire they make in each 12-month period. The number of qualified employers in any given program year is estimated to be approximately 1,650. That should yield 1,000-1,100 returned surveys. Although there are many more placements than 1,650, roughly 30% of them are with host agencies, and most of the remainder are self-placements by the participant. In 2013, there were 552 returned employer surveys. It was not possible to calculate a response rate because of grantee non-compliance with the procedures for survey administration. New procedures, a new management report, and new edits to the data collection system are expected to improve compliance with survey administration and result in an increase in employers surveyed.

3. Response Rates

Response rates for the employer survey have been difficult to track because of grantee non-compliance with the requirement to enter the survey number and date of mailing into the SPARQ system. Where the survey administration requirements have been followed, employer response rates have been very high. To improve compliance with the procedures, several changes have recently been made to the administration of the employer survey. The changes include: 1) a new management report that will tell grantees when each qualified employer needs to be surveyed; 2) a new edit in the SPARQ data collection system that will warn grantees when they have failed to deliver surveys as required; and 3) new edits that will prevent the entry of incomplete information about the survey and date of delivery into the data collection system. As a result of these changes, we expect greatly increased compliance with the survey administration and an increase in the numbers of employers surveyed.

2. Describe the procedures for the collection, including: the statistical methodology for stratification and sample selection; the estimation procedure; the degree of accuracy needed for the purpose described in the justification; any unusual problems requiring specialized sampling procedures; and any use of periodic (less frequent than annual) data collection cycles to reduce burden.

A random sample is drawn annually from all participants and host agencies that were active at any time during the prior 12 months. The data come from the SPARQ data collection and reporting system.

A. Design Parameters:

There are 16 national grantees operating in 49 states and territories

There are 56 state/territorial grantees

There are three customer groups to be surveyed (participants, host agencies, and employers)

Surveying each of these customer groups should be considered a separate survey effort.

The major difference among the national, state, and territorial grantees is the number of participants each serves in a given year. Using the sampling from PY 2013, 12 of the 16 national grantees had sufficient participants to provide a sample of 370. Only 7 state grantees had sufficient participants to provide a sample of 370. In regard to the host agency sampling, 8 national grantees had sufficient numbers of host agencies to allow a sample of 370. None of the state grantees had a sufficient number of host agencies to permit sampling. Where sampling is not possible, all participants or host agencies are surveyed. None of the territories had sufficient numbers for the participant or host agency sampling. In fact, only Puerto Rico participated in the surveys. American Samoa, Guam, the Northern Mariana Islands, and the Virgin Islands did not participate because time-sensitive mail surveys cannot be conducted overseas; the methodology requires tight turnaround times for three waves of surveys.

Participant Surveys:

A point estimate for the ACSI score is required for each national grantee, both in the aggregate and for each state in which the national grantee is operating. The calculations of the ACSI are made using the formulas presented in Section 4, page 15, near the end of this document.

A point estimate for the ACSI score is required for each state grantee.

A sample of 370 participants from each national and state grantee will be drawn from the pool of participants who are currently active or have exited the program during 12 months prior to the survey period.

Some state grantees may not have a total of 370 participants available to be surveyed. In those cases, all participants who are active or who have exited during the 12 months prior to the survey will be surveyed.

As indicated above, 370 participants will be sampled from each national grantee. With an expected response rate of 60 percent, this should yield 222 usable responses. However, a single grantee sample may not be distributed equally across the states in which a national grantee operates. We therefore aim for a sample of 70 in each state, with a potential of 42 responses. Where there are fewer than 70 potential respondents in the sample for a state, we select all participants. If the overall sample for a national grantee is less than 370 and there are additional participants in some states who have not been sampled, we will over-sample to bring the sample to 370 and the potential responses to at least 222.

To determine the impact of differing sample sizes on the standard deviation of the ACSI, a series of samples was drawn from existing participant data. The samples were drawn using the random sampling function within SPSS Version 19, which uses a random numbers table to draw each sample. The function allows the analyst to choose the number of records to be sampled for each run. The average standard deviation for samples of 250 was 20.6 in PY2013. The average standard deviation for samples of 50 was 18.6 for the same year. The analysis of standard deviations was used to provide greater confidence that grantees with smaller numbers of participants or host agencies would not have significantly different variability. We did not routinely calculate effect sizes (Cohen’s d or other variations), since we do not calculate differences between the ACSI scores of the different grantees using either t-tests or ANOVA.
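The comparison of standard deviations across sample sizes can be sketched as follows. This is an illustration only, not the SPSS procedure itself: it uses Python's standard library instead of SPSS, and the score population is synthetic stand-in data rather than SCSEP survey results. The function name, the number of runs, and the seeds are assumptions.

```python
import random
import statistics


def average_sample_sd(scores, sample_size, runs, seed=0):
    """Average the sample standard deviation over repeated random draws."""
    rng = random.Random(seed)
    sds = []
    for _ in range(runs):
        draw = rng.sample(scores, sample_size)  # drawn without replacement
        sds.append(statistics.stdev(draw))      # n - 1 denominator
    return statistics.mean(sds)


# Synthetic stand-in for ACSI scores on a 0-100 scale (not SCSEP data).
data_rng = random.Random(42)
population = [min(100.0, max(0.0, data_rng.gauss(82, 20))) for _ in range(5000)]

sd_250 = average_sample_sd(population, 250, runs=20)
sd_50 = average_sample_sd(population, 50, runs=20)
print(round(sd_250, 1), round(sd_50, 1))
```

Averaging over several runs matters because individual draws, especially small ones, give noticeably different standard deviations from one run to the next.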

Host Agency Surveys:

A point estimate for the ACSI score is required for each national grantee, both in the aggregate and for each state in which the national grantee is operating.

A point estimate for the ACSI score is required for each state grantee.

A sample of 370 host agency contacts from each national and state grantee will be drawn from the pool of agencies hosting participants during 12 months prior to the survey period.

Some state grantees may not have a total of 370 host agency contacts available to be surveyed. In those cases, all agencies hosting participants during the 12 months prior to the survey will be surveyed.

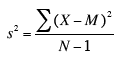

As indicated above, 370 host agencies will be sampled from each national grantee. With an expected response rate of 60 percent, this should yield 222 usable responses. However, a single grantee sample may not be distributed equally across the states in which a national grantee operates. We, therefore aim for a sample of 70 in each state, with a potential of 42 responses. Where there are fewer than 70 potential respondents in the sample for a state, we select all host agencies. If the overall sample for a national grantees is less than 370 and there are additional host agencies in some states that have not been sampled, we will over-sample in those states to bring the sample to 370 and the potential responses to at least 222. To determine the impact of different sample sizes on standard deviations, a series of samples was drawn from existing host agency data. The samples were drawn using the random sampling function (Data, Select Cases, Random sample of Cases) within SPSS Version 19. The procedure for 250 and 50 were each run several times, since the statistician observed that different random runs of each quantity of records yielded slightly different standard deviations. The standard deviations of the different runs of each sample size were than averaged. The average standard deviation for samples of 250 was 20.8 for PY2013. The average standard deviation for samples of 50 was 22.8 for the same year. The standard deviation formula used in SPSS is
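For reference, the sample standard deviation reported by SPSS descriptive statistics is conventionally written with an n - 1 denominator. The rendering below is a sketch under that assumption; the symbols (x_i for an individual ACSI score, x-bar for the sample mean, n for the number of scores in the sample) are notation chosen here for illustration.

```latex
s = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}\left(x_i - \bar{x}\right)^2},
\qquad
\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i
```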

The number surveyed is as high as it is because the sampling for grantees with large numbers of participants and host agencies is a two-stage sample. The first stage seeks a total grantee sample of 370. The second stage seeks to ensure that, where possible, 70 participants or host agencies from each state served by a national grantee are included in order to provide data from all states served without unduly increasing the overall sample size. Since much of our customers’ experience is determined by local management of the program, surveying each state captures that dimension of the customer experience. The oversampling at this second stage also contributes to large numbers being surveyed among those national grantees that serve participants in many different states. National grantees operate in as few as two states and as many as 31 states.
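The two-stage logic described above can be sketched in a few lines. This is one possible reading of the procedure, not the contractor's actual implementation: the function name, the `(record_id, state)` record layout, and the choice to top up each state from unsampled records are assumptions made for illustration.

```python
import random
from collections import defaultdict


def two_stage_sample(records, grantee_target=370, state_target=70, seed=0):
    """Sample one national grantee's records, given as (record_id, state) tuples."""
    rng = random.Random(seed)

    # Stage 1: simple random sample of the grantee's eligible records,
    # or every record when no more than the target are available.
    if len(records) <= grantee_target:
        return list(records)
    sampled = rng.sample(records, grantee_target)
    chosen = set(sampled)

    # Group the records not yet sampled by state for the second stage.
    remaining = defaultdict(list)
    for rec in records:
        if rec not in chosen:
            remaining[rec[1]].append(rec)

    # Count the stage 1 selections in each state.
    per_state = defaultdict(int)
    for _, state in sampled:
        per_state[state] += 1

    # Stage 2: top up each state toward the state target where possible.
    for state, pool in remaining.items():
        shortfall = state_target - per_state[state]
        if shortfall > 0:
            sampled.extend(rng.sample(pool, min(shortfall, len(pool))))
    return sampled


# Hypothetical grantee: 2,000 eligible records spread evenly over 10 states.
records = [(i, f"S{i % 10}") for i in range(2000)]
sample = two_stage_sample(records)
print(len(sample))
```

In this hypothetical case the first stage draws 370 records, but topping up ten states to 70 each pushes the total to roughly 700, which illustrates why grantees operating in many states end up with samples well above the minimum.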

For the employer surveys, all qualified employers are surveyed because the number of qualified employers is relatively low. Employers are only surveyed if they did not also serve as host agencies, if the grantee was involved in making the placement, and if the employer was aware of the grantee’s involvement. Employers are only surveyed for the first hire they make in each 12-month period. The number of qualified employers in any given program year is estimated to be approximately 1,650. In 2013, there were 552 returned employer surveys. It was not possible to calculate a response rate because of grantee non-compliance with the procedures for survey administration. New procedures, a management report, and new edits to the data collection system are expected to improve compliance with survey administration and result in an increase in employers surveyed.

Employer Surveys:

All qualified employers are surveyed. There is no sampling. To be considered eligible for the survey, the employer: 1) must not have served as a host agency in the past 12 months; and 2) must have had substantial contact with the sub-grantee in connection with hiring of the participant; and 3) must not have received another survey from this program during the current program year.

Qualified employers are surveyed following the first time they hire a participant in the program year. The surveys are delivered by the sub-grantees at the time the sub-grantees conduct the first case management follow-up, which typically occurs 30-45 days after the date of hire.

Once an employer has been surveyed, the employer will be surveyed again when it has another placement and at least one year has passed since the last survey.

The statute includes the surveys of all three customer groups as additional measures of performance for SCSEP that must be reported each year. Survey procedures ensure that no customer is surveyed more than once each year. Less frequent data collection would require a change in the law.

B. Degree of Accuracy Required

The 2000 amendments to the OAA designated customer satisfaction as one of the core SCSEP measures for which each grantee had negotiated goals and for which sanctions could be applied. In the first year of the surveys, PY 2004, baseline data were collected. The following year, PY 2005, was the first year when evaluation and sanctions were possible. Because of changes made by the 2006 amendments to the OAA, starting with PY 2007, the customer satisfaction measures have become additional measures (rather than core measures), for which there are no goals and, hence, no sanctions.

When the surveys were originally approved by OMB in 2004, a target response rate of 70 percent was appropriate because of the required use of the surveys for goal setting and sanctions. Since July 1, 2007, however, the surveys have been used for program improvement, and a lower degree of accuracy should be acceptable for this purpose. There has been widespread “survey fatigue” throughout the country, and SCSEP has not been immune. We have, therefore, set 60 percent as the target response rate.

In regard to drawing a larger sample, the decision to use 370 as the target sample size is based on cost and practicality. Any larger target would increase the survey costs and make those costs disproportionate to the size of the program budget. In addition, as noted in Section 2A, there are very few grantees that can provide a larger sample.

Two major studies substantiate the appropriateness of a 60 percent response rate goal. A study by the American Association for Public Opinion Research (AAPOR) has identified a 60 percent response rate as a minimum standard for studies that can be published in key journals. Kiess & Bloomquist (1985) determined that a 60 percent response rate was sufficient to avoid bias by most happy/least happy respondents. Don Dillman (1974, 2000) has continued to support a 70 percent rate. We do follow the mail procedures outlined by Dillman (see Section 3.A), but we do not believe the current environment makes a 70 percent response rate realistic. Moreover, the statisticians at the Department of Statistics at the University of Connecticut did not find evidence of non-response bias at that level (see Section 3.B).

From 2000-2006, the customer satisfaction surveys were part of the core measures required by Congress to assess grantee performance. The stakes were high because grantees that failed to meet their negotiated goals for the core measures in the aggregate were subject to sanctions, including potential loss of their grants. For that reason, a 70 percent response rate may have been appropriate. Those stakes were lowered when the 2006 amendments to the Older Americans Act (OAA) made the surveys additional measures for which there are no goals and no sanctions. A 60 percent response rate is adequate for providing grantees reliable information for program improvement and for providing accountability to Congress and the public.

3. Describe the methods used to maximize response rates and to deal with nonresponse. The accuracy and reliability of the information collected must be shown to be adequate for the intended uses. For collections based on sampling, a special justification must be provided if they will not yield "reliable" data that can be generalized to the universe studied.

A. Maximizing Response Rates

Participant and Host Agency Surveys:

The responses are obtained using a uniform mail methodology. The rationale for using mail surveys includes: individuals and organizations that have a substantial relationship with program operators, in this case, with the SCSEP sub-grantees, are highly likely to respond to a mail survey; mail surveys are less expensive when compared to other approaches; and mail surveys are easily and reliably administered to potential respondents. The experience in administering the surveys by mail since 2004 has established the efficacy of this approach. Use of the Internet for conducting the host agency surveys has proven to be impracticable at this time because an insufficient number of host agency contact persons have reliable e-mail addresses.

As with other data collected on the receipt of services, the responses to the customer satisfaction surveys must be held private as required by applicable state law. Before promising respondents privacy of results, grantees must ensure that they have legal authority under state law for that promise.

To ensure ACSI results are collected in a consistent and uniform manner, the following standard procedures are used by grantees to obtain participant and host agency customer satisfaction information:

ETA’s survey research contractor, The Charter Oak Group, determines the samples based on data in the SCSEP Performance and Results QPR (SPARQ) system. As with the Workforce Investment Act (WIA) programs, there are smaller grantees for which a sample of 370 potential respondents is not achievable. In such cases, no sampling takes place and the entire population is surveyed. See the Design Parameters in Section 2 above for details.

Grantees are required to ensure that sub-grantees notify customers of the customer satisfaction survey and the potential for being selected for the survey. Sub-grantees are required to:

Inform participants at the time of enrollment and exit.

Inform host agencies at the time of assignment of a participant.

Mail a customized version of a standard letter prepared by the Charter Oak Group to all participants selected for the survey, informing them that they will receive a survey in approximately one week.

When discussing the surveys with participants for any of the above reasons, refresh contact information, including mailing address.

Grantees are required to ensure that sub-grantees prepare and send pre-survey letters to those participants selected for the survey.

Grantees provide the participant sample list to sub-grantees about 3 weeks prior to the date of the mailing of the surveys.

Letters are personalized using a mail merge function and a standard text.

Each letter is printed on the sub-grantee’s letterhead and signed in blue ink by the sub-grantee’s director to provide the appearance of a personal signature.

Grantees are responsible for the following activities:

Provide letterhead, signatures, and correct return address information to DOL for use in the survey cover letters and mailing envelopes.

Send participant sample to sub-grantees with instructions on preparing and mailing pre-survey letters.

Contractors to the Department of Labor are responsible for the following activities:

Provide sub-grantees with list of participants to receive pre-survey letters.

Print personalized cover letters for first mailing of survey. Each letter is printed on the grantee’s letterhead and signed in blue ink with the signatory’s electronic signature.

Generate mailing envelopes with appropriate grantee return addresses.

Generate survey instruments with bar codes and preprinted survey numbers.

Enter preprinted survey numbers for each customer into worksheet.

Assemble survey mailing packets: cover letter, survey, pre-paid reply envelope, and stamped mailing envelope.

Mail surveys on designated day. Enter date of mailing into worksheet.

Send survey worksheet to the Charter Oak Group.

Using the list of customers who responded to the first mailing, generate the list of non-respondents for the second mailing.

Print second cover letter with standard text (different text from the first letter). Letters are personalized as in the first mailing.

Enter preprinted survey number into worksheet for each customer to receive second mailing.

Assemble second mailing packets: cover letter, survey, pre-paid reply envelope, and stamped mailing envelope.

Mail surveys on designated day. Enter date of mailing into worksheet.

Send survey worksheet to the Charter Oak Group.

Repeat tasks above if third mailing is required.

Employer surveys:

Grantees are required to ensure that sub-grantees notify employers of the customer satisfaction survey and the potential for being selected for the survey. Employers should be informed at the time of placement of the participant.

Grantees and sub-grantees are responsible for the following activities:

Sub-grantee uses the new management report to identify an employer for surveying the first time there is a placement with that particular employer in the program year. Employer is selected only if it is not also a host agency and the sub-grantee has had substantial communication with the employer in connection with the placement. Each employer is surveyed only once each year. A management report in SPARQ provides grantees and sub-grantees a list of all qualified employers that should receive the survey.

Sub-grantee generates customized cover letter using standard text.

Sub-grantee hand-delivers survey packet (cover letter, survey, stamped reply envelope) to employer contact in person at time of first follow-up (Follow-up 1). Mail may be used if in-person delivery is not practical.

Sub-grantee enters pre-printed survey number and date of delivering packet into SPARQ database. (Survey instruments with pre-printed bar codes and survey numbers are provided to the grantees by DOL.)

A contractor, responsible for processing the surveys, sends weekly e-mail to all grantees and sub-grantees listing the survey numbers of all employer surveys that have been completed.

Sub-grantee reviews e-mails for three weeks following the delivery of the survey to determine if survey was completed.

If the contractor lists a survey number in the weekly email, the sub-grantee updates database with date. If survey not received, sub-grantee calls employer contact and uses an appropriate script to either encourage completion of the first survey (if the employer still has it) or indicate that the sub-grantee will send another survey for completion.

If needed, sub-grantee generates second cover letter using same procedures as for first cover letter.

Sub-grantee follows procedures as for first survey.

If survey received, sub-grantee updates database with date.

If third mailing needed, sub-grantee repeats steps above.

Grantee monitors process to make sure that all appropriate steps have been followed and to advise sub-grantee if third effort at obtaining completed survey is required.
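The follow-up logic in the employer survey steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name, argument names, and survey numbers are hypothetical and do not reflect the actual SPARQ database, and the cap of three attempts is inferred from the references to a second cover letter and a third mailing.

```python
from datetime import date, timedelta

REVIEW_WINDOW = timedelta(weeks=3)  # weekly e-mails are reviewed for three weeks
MAX_ATTEMPTS = 3                    # assumption: first delivery plus two more efforts


def next_step(survey_number, delivered_on, attempt, completed_numbers, today):
    """Return the follow-up action for one employer survey.

    completed_numbers is the set of survey numbers listed so far in the
    contractor's weekly e-mails (hypothetical representation).
    """
    if survey_number in completed_numbers:
        return "record completion date"
    if today - delivered_on < REVIEW_WINDOW:
        return "keep reviewing weekly e-mails"
    if attempt < MAX_ATTEMPTS:
        return "call employer contact; resend survey if needed"
    return "no further follow-up"


# Hypothetical survey numbers and dates, for illustration only.
completed = {"E-1001"}
print(next_step("E-1001", date(2015, 3, 2), 1, completed, date(2015, 3, 10)))
print(next_step("E-1002", date(2015, 3, 2), 1, completed, date(2015, 3, 30)))
```

The sketch checks for completion first, so a survey reported by the contractor is never followed up, regardless of how much time has passed.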

B. Nonresponse Bias

Missing data are a potential concern: even with the relatively high response rates presented in Section 1, non-response bias must still be addressed. A study of non-response bias and the impact of missing data was conducted at the Department of Statistics, University of Connecticut, in 2013-2014.

The objective was to obtain bias-adjusted point estimates of:

Overall ACSI score

ACSI score for each national grantee

ACSI score for each state grantee

The author of the study used two different modeling approaches to determine the degree to which nonresponse biased the ACSI results. Below are the predicted nationwide ACSI scores by each of the models and without any adjustment:

No adjustment: 82.40

Ordinal Logistic adjustment: 85.58

Multinomial Logistic adjustment: 84.73

Although the ordinal and multinomial logistic adjustments use different methodologies for estimating non-response bias, their estimates are within one point of each other (85.58 versus 84.73, a difference of 0.85). While they are both regression models, the major distinction is that the multinomial method assumes independence of choices and the ordinal method does not. As the statistician notes in his report: “Both of the regression models considered have assumptions that are difficult to validate in practice. However, the good news is that since the primary objective is score prediction (and not interpretation of regression coefficients), the models considered are quite robust to departures from ideal conditions.”

The fact that the scores change very little indicates that the non-respondents’ satisfaction is very similar to that of those who responded to the survey.1 Adjusting for nonresponse would slightly increase the scores of some but not all grantees. Given the relatively minor impact of nonresponse, we consider the adjustments unnecessary, especially because the customer satisfaction scores are no longer a part of the formal assessment of grantee performance (based on changes to the statute in 2006) and are being used to guide program improvement.

4. Describe any tests of procedures or methods to be undertaken. Tests are encouraged as effective means to refine collections, but if ten or more test respondents are involved OMB must give prior approval.

The core questions that yield the single required measure for each of the three surveys are from the ACSI and cannot be modified. The supplemental questions included in this submission have been revised for the first time since OMB approved them in 2004. The revision is based on three factors:

Is the question a strong driver of the ACSI index, as determined by: a) a high correlation with and independent contribution to the ACSI, as determined through regression equations; or b) the strength of the relationship with the ACSI, using a means test of the ACSI scores associated with each rating (e.g., the average ACSI for those rating their outlook on life as “Much more negative” is 64, while for those rating their outlook as “Much more positive” it is 90, a 26-point gap)?

Does the question ask something of value for the administration or management of the program that is not otherwise available? This includes questions that relate to program requirements.

Is the question redundant with another question or set of questions? For example, the question of whether the customer would recommend SCSEP has always been used in all of the surveys. It has had a consistently high score and duplicates information provided more reliably by the ACSI index.
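The means test mentioned in the first factor can be sketched as follows. This is a minimal illustration, not the analysis actually performed: the function name is hypothetical, and the responses are made-up values chosen only so that the group means match the 64 and 90 quoted in the example.

```python
from collections import defaultdict
from statistics import mean


def mean_acsi_by_rating(responses):
    """Group (rating, acsi_score) pairs by rating and average the scores."""
    groups = defaultdict(list)
    for rating, acsi in responses:
        groups[rating].append(acsi)
    return {rating: mean(scores) for rating, scores in groups.items()}


# Made-up responses for illustration only; these are not survey data.
responses = [
    ("Much more negative", 60), ("Much more negative", 68),
    ("Much more positive", 88), ("Much more positive", 92),
]

group_means = mean_acsi_by_rating(responses)
gap = group_means["Much more positive"] - group_means["Much more negative"]
print(group_means)
print(gap)
```

A large gap between the lowest and highest rating categories suggests that the supplemental question tracks the ACSI closely.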

The revised supplemental questions also respond to customer feedback over the last 10 years of survey administration, a review of responses to the questions over that time, changes in the law and program priorities, and feedback from the grantees. The revised surveys are shorter than the prior versions. Survey length has not been observed to be a burden or a deterrent to completion of the surveys.

The ACSI model (including the weighting methodology) is well documented. (See http://www.theacsi.org/about-acsi/the-science-of-customer-satisfaction.) Each ACSI score is the weighted sum of the values of the three ACSI questions, after those values are transformed to a 0 to 100 scale. The weights are applied to each of the three questions to account for differences in the characteristics of the state’s customer groups.

For example, assume the mean values of three ACSI questions for a state are:

1. Overall Satisfaction = 8.3

2. Met Expectations = 7.9

3. Compared to Ideal = 7.0

These mean values from the raw data must first be transformed to a 0 to 100 scale. This is done by subtracting 1 from each mean value, dividing the result by 9 (the range of a 1 to 10 raw data scale), and multiplying by 100:

1. Overall Satisfaction = (8.3 - 1)/9 x 100 = 81.1

2. Met Expectations = (7.9 - 1)/9 x 100 = 76.7

3. Compared to Ideal = (7.0 - 1)/9 x 100 = 66.7

The ACSI score is calculated as the weighted average of these values. Assuming the weights for the example state are 0.3804, 0.3247 and 0.2949 for questions 1, 2 and 3, respectively, the ACSI score for the state would be calculated as follows:

(0.3804 x 81.1) + (0.3247 x 76.7) + (0.2949 x 66.7) = 75.4
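The worked example above can be reproduced with a short script. The following is a minimal sketch in Python; the function names are illustrative and not part of any ACSI or SPARQ tooling, and the weights are the example values from the text rather than actual published weights.

```python
def to_index_scale(mean_rating, low=1.0, high=10.0):
    """Rescale a mean rating on the raw 1-to-10 scale to a 0-to-100 scale."""
    return (mean_rating - low) / (high - low) * 100.0


def acsi_score(mean_ratings, weights):
    """Weighted average of the three rescaled ACSI question means."""
    if len(mean_ratings) != len(weights):
        raise ValueError("need one weight per question")
    return sum(w * to_index_scale(m) for m, w in zip(mean_ratings, weights))


# Example state from the text: means for Overall Satisfaction,
# Met Expectations, and Compared to Ideal, with the example weights.
means = [8.3, 7.9, 7.0]
weights = [0.3804, 0.3247, 0.2949]

scaled = [round(to_index_scale(m), 1) for m in means]
score = round(acsi_score(means, weights), 1)
print(scaled)  # [81.1, 76.7, 66.7]
print(score)   # 75.4
```

The sketch weights the unrounded rescaled values, whereas the text weights the values rounded to one decimal place; for this example both approaches give 75.4.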

Weights were calculated by a statistical algorithm to minimize measurement error or random survey noise that exists in all survey data. State-specific weights are calculated using the relative distribution of ACSI respondent data for non-regulatory Federal agencies previously collected and analyzed by CFI and the University of Michigan.

Specific weighting factors have been developed for each state. New weighting factors are published annually. It should be noted that the national grantees have different weights applied depending on the states in which their sub-grantees’ respondents are located.

5. Provide the name and telephone number of individuals consulted on the statistical aspects of the design, and the name of the agency unit, contractor(s), grantee(s), or other person(s) who will actually collect and/or analyze the information for the agency.

The Charter Oak Group, LLC:

Barry A. Goff, Ph.D., (860) 659-8743, [email protected]; Bennett Pudlin, J.D., (860) 324-3555, [email protected]

Barry Goff was consulted on the statistical aspects of the design; Barry Goff and Bennett Pudlin will collect the information.

Ved Deshpande, Department of Statistics, University of Connecticut, (860) 486-3414, [email protected], was also consulted on the statistical aspects of the design.

1 Ved Deshpande, Department of Statistics, University of Connecticut, “Bias-adjusted Modeling of ACSI scores for SCSEP” (2014)

| File Type | application/msword |

| File Modified | 2015-03-23 |

| File Created | 2015-03-23 |
