RELSW 5.1.7.5 EAF OMB Supporting Statement Part B

Regional Educational Laboratory (REL) Southwest Effective Advising Framework Evaluation

SUPPORTING STATEMENT

FOR PAPERWORK REDUCTION ACT SUBMISSION

PART B: Collection of Information Employing Statistical Methods

July 2024

Submitted by:

National Center for Education Evaluation and Regional Assistance (NCEE)

Institute of Education Sciences (IES)

U.S. Department of Education

Washington, DC

OMB Number: 1850-NEW

Revised July 9, 2024

Table of Contents

Description of the Effective Advising Framework

Research questions for this study

B1. Respondent universe and sample design

Other data collection activities

B2. Information collection procedures

a. Notification of the sample and recruitment

b. Statistical methodology for stratification and sample selection

c. Degree of accuracy needed for the purpose described in the justification

d. Unusual problems requiring specialized sampling procedures

e. Any use of periodic (less frequent than annual) data collection cycles to reduce burden

B3. Methods to maximize responses

Overview

The U.S. Department of Education (ED), through its Institute of Education Sciences (IES), requests clearance for the recruitment materials and data collection protocols under the Office of Management and Budget (OMB) clearance agreement (OMB Number 1850-NEW) for activities related to the Regional Educational Laboratory (REL) Southwest program under contract 91990023C0003.

By 2030, the Texas Higher Education Coordinating Board (THECB) expects that 60 percent or more of all new jobs in Texas will require some postsecondary education. However, in 2019, less than half of the Texas population aged 25–34 years (44.3 percent) had some type of postsecondary credential (THECB, 2021). To close this gap and support districts in meeting the state statute that requires schools to fully develop each student’s academic, career, personal, and social abilities (Texas Education Code §33.006), the Counseling, Advising, and Student Supports team (under the Division of College, Career, and Military Preparation) at the Texas Education Agency (TEA) established the Effective Advising Framework (EAF) in 2020/21. This framework expands access to effective college and career advising by streamlining and modernizing advising offerings and services for secondary and postsecondary students. The initiative aims to support students in making informed decisions about postsecondary education and careers and to offer professional development to educators and guidance counselors on advising services.

The REL Southwest developed a partnership with TEA’s Division of College, Career, and Military Preparation to inform the development of resources that meet identified needs and implement additional changes to improve the EAF. Therefore, the REL Southwest is requesting clearance to conduct a study that will examine the implementation of the EAF across the three cohorts of regional education service centers (ESCs) and school districts that are currently participating in the EAF pilot grant program. The pilot program supports the development and implementation of an individual student planning system within the context of a comprehensive school counseling program. Participation in the pilot program was determined through grant application processes from TEA to the ESCs. The ESCs selected to receive grants were required to partner with two to four school districts within their geographic region. Because it is expected that districts are implementing the EAF in a variety of ways, this study will provide an in-depth look at the variation in implementation across districts, including an analysis of the factors that support or hinder implementation. The study will serve as an evaluation of progress toward the partnership’s medium-term goals that districts will use materials, tools, and resources based on effective or promising practices to conduct individual student planning; require Individual Career and Academic Plans (ICAP) for all students by grade 8; and increase districts’ ability to determine whether students are on track for postsecondary readiness by applying grade-level indicators of progress.

Description of the Effective Advising Framework

The EAF is designed to enhance both the quality and quantity of advising support that students experience throughout their time in K–12. The EAF outlines five “levers” of effective advising practices on which school districts are encouraged to base their plans to improve the way they prepare students for success after high school. Lever 1 focuses on building strong program leadership and planning. Lever 2 aims to provide school counselors and advisors with the resources they need to be effective. Lever 3 is about promoting a culture of advising within schools that engages more personnel than just counseling staff in coordinated efforts to support students in meeting grade-level expectations. Lever 4 focuses on establishing effective external partnerships to fill what would otherwise be gaps in the capacity of schools to promote postsecondary readiness. Finally, Lever 5 concerns the selection and use of high-quality advising materials and assessments to enhance student preparation for life outside of K–12 schooling.

The purpose of the EAF is to systematically support districts and schools in effective advising efforts, with the ultimate goal of increasing college, career, and military readiness (CCMR) rates. TEA uses the acronym “CCMR” to make explicit the inclusion of military readiness along with college and career readiness. Policymakers and researchers more frequently use “college and career readiness” to describe students’ postsecondary preparation. Educators and policymakers have used definitions of college and career readiness when developing new initiatives, programs, or materials, and these definitions can also be used to monitor student outcomes (Byrd & Macdonald, 2005; Mishkind, 2014; Tierney & Sablan, 2014). The definitions circulated by researchers and policymakers vary in length but emphasize a consistent set of skills, including academic content knowledge, critical thinking, communication and community-building skills, and self-management skills (Camara, 2013; Conley, 2008; Mishkind, 2014; Sampson et al., 2011). College readiness and career readiness are often conceptualized as the same thing, but there are meaningful differences between these two approaches to postsecondary readiness (Pérusse et al., 2017).

Research questions for this study

The REL Southwest is serving as the evaluator of the Effective Advising Framework. The research questions (RQs) for this applied research study are as follows:

1. How are districts and schools using the Effective Advising Framework (EAF) to enact district implementation plans?

a. What approaches and strategies are districts using to apply the EAF? How do approaches and strategies differ by district size and locale?

b. What roles are district and school staff playing in implementing the EAF?

c. What materials and resources are districts and schools using, and how?

d. How and to what extent have districts and schools planned and implemented an individual planning system as outlined in the EAF?

e. How are districts using the EAF to align advising practices across grade levels?

f. To what extent are districts aware of and have goals to increase their CCMR rates? What efforts are districts making to increase their CCMR rates?

2. What factors inhibit or facilitate successful implementation of the EAF at the district and school levels?

a. Which structures and practices by district implementation steering committees support successful implementation of the EAF?

b. To what extent are school staff aware of district EAF implementation plans? To what extent do school staff understand and effectively carry out the implementation plans?

c. To what extent are student-facing school staff trained on the EAF?

3. What are participating districts’ CCMR rates, and how have these rates changed over time?

a. How do CCMR rates differ by student gender, race/ethnicity, socioeconomic status, and English proficiency status as well as school size and locale?

B1. Respondent universe and sample design

The REL Southwest plans to collect quantitative and qualitative data in the 2024/25 school year from the 12 ESCs and 34 of the 52 districts that received TEA’s EAF implementation grants between 2021/22 and 2023/24. Due to the time and effort that will be required to secure district approval to conduct the study and the likelihood that some districts will choose not to participate in the study, we set 34 total districts as a more reasonable target for our study sample than the total 52 districts involved in the pilot. We will use the term “cohort” to identify school districts based on the number of years each district has been implementing the EAF. Currently, a total of three cohorts of districts are participating in the EAF pilot program, including districts in their first, second, or third year of implementation (table 1). It is important to note that some EAF coaches will be providing support for districts in more than one cohort.

Table 1. Participating Effective Advising Framework districts, disaggregated by cohort

| Cohort | Number of districts | First implementation year | Number of implementation years at start of data collection (2024/25) |
| --- | --- | --- | --- |
| Cohort 1 | 11 | 2021/22 | 3 |
| Cohort 2 | 18 | 2022/23 | 2 |
| Cohort 3 | 23 | 2023/24 | 1 |

Recruitment of districts

The REL Southwest researchers will contact the 12 EAF coaches from the 12 ESCs and invite them to participate. The researchers will confirm with each coach which districts and district project leads they support. The REL Southwest will then solicit participation in the project from all 52 districts that received an implementation or planning grant. To do this, the research team will contact each district EAF project lead via personalized emails that state the purpose of this research, that it is supported by TEA and IES, and that participation is voluntary. We will then follow up as needed until we have recruited a representative sample that includes 34 of the 52 districts participating in the EAF pilot. The REL Southwest team will use TEA’s district type categorization to maximize the regional variation of districts represented in our sample. District type, as defined by TEA, is an eight-category variable that ranges from “major urban” and “major suburban” to “non-metropolitan: fast growing” and “rural.” To the extent possible, we will endeavor to recruit districts from all eight categories. This amounts to a purposive sampling of districts aimed at maximizing variation in district type. We will complete any research application procedures required by districts before recruiting participants or collecting data. Once these requirements have been met, we will reach out to the respective district EAF project leads and inform them of the timeline and process of data collection.

Extant data on school staff

After securing district commitment to participate and research approval (if required), we will recruit participants for individual data collection efforts. We will rely on cooperation from each participating district to send us lists of names and contact information for principals, assistant/vice principals, and counselors. The data they share with us will allow us to contact school staff to elicit participation in the surveys and to contact select staff to participate in the interviews and focus groups.

Surveys

To answer RQs 1 and 2, the REL Southwest will administer online surveys in the spring of 2025 to all EAF coaches, district project leads, and school staff (school administrators and counselors). Because the surveys will be designed in such a way that they can be completed by individuals regardless of where their district is in the implementation process, all three cohorts will receive the same surveys at the same time. To collect survey data, the study team will electronically deliver a survey form to potential participants via Qualtrics. The content will remain largely constant across the three surveys; however, we will alter the text of each question so that the wording is appropriate for each respondent group (EAF coaches, district project leads, and school staff). We expect that this survey distribution will involve eliciting survey responses from 12 EAF coaches, 34 district project leads, and 1,397 school staff.

One-on-one interviews

After the survey administration is complete, we will recruit a subset of EAF coaches and district project leads to participate in virtual, one-on-one interviews with members of our research team. We will select eight EAF coaches to participate in interviews. Our team will then select 16 district project leads based on district characteristics, using a purposive sampling method. Specifically, we will begin by selecting districts from each TEA district type category. With the goal of selecting a sample that comprises a wide array of districts, we will identify districts that represent a range in the following categories: EAF cohort, geographic region, percentage of students identified as economically disadvantaged, percentage identified as emergent bilingual/English learner students, percentage of students who are Black, percentage of students who are Hispanic or Latino/a, and district locale type (rural, urban, or suburban). This diversity is important for the current study because it will help ensure that findings are not unduly influenced by certain types of districts. If an ESC or district declines to participate in qualitative data collection, we will select an ESC or district that is as similar as possible to replace it. We will repeat this process until we have a target sample of data collection participants. EAF coach interviews will take place in September 2025, and district project lead interviews will subsequently be conducted in October and November 2025.

Focus groups

Approximately 64 school staff members will be recruited to participate in the focus groups, with the goal of including about four members representing at least two schools from each of the 16 districts participating in the one-on-one interviews. We will work in consultation with the district project leads to select school staff members who oversee advising efforts at the school (such as counselors and administrators) or work directly with students (such as teachers). Focus groups with school staff members will take place in January and February 2026.

Other data collection activities

To address RQ3, we will obtain quantitative administrative data from the Texas Education Research Center (ERC), a clearinghouse of public education data collected by the state. These data are broadly available to researchers who complete an application process. The ERC contains data on all Texas school districts and is therefore not a sample. It also contains only archival data and thus is not a part of this clearance request. For this reason, table 2 describes the potential respondent universe for the data collection efforts for RQs 1 and 2 only.

Table 2. Sample sizes and response rates

| Sample unit | Sample size | Response rate |
| --- | --- | --- |
| District* | 52 | 65% |
| EAF coach surveys** | 12 | 100% |
| District staff surveys** | 34 | 100% |
| School staff surveys** | 1,644 | 85% |
| EAF coach interviews** | 8 | 100% |
| District staff interviews** | 16 | 100% |
| School staff focus groups** | 128 | 50% |

* Recruitment

** Data collection activities
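The sample sizes and response rates in table 2 imply the participant targets stated in section B1 through simple arithmetic: sample size multiplied by anticipated response rate. The following Python sketch illustrates that check. The function and variable names are hypothetical, and rounding to the nearest whole respondent is an assumption rather than a stated study procedure.

```python
# Illustrative sketch: expected respondents = sample size x anticipated response rate.
# The figures come from table 2; the names and the rounding rule are assumptions.
TABLE_2 = {
    "District": (52, 0.65),
    "EAF coach surveys": (12, 1.00),
    "District staff surveys": (34, 1.00),
    "School staff surveys": (1644, 0.85),
    "EAF coach interviews": (8, 1.00),
    "District staff interviews": (16, 1.00),
    "School staff focus groups": (128, 0.50),
}


def expected_respondents(sample_size: int, response_rate: float) -> int:
    """Return the expected number of respondents, rounded to the nearest whole unit."""
    return round(sample_size * response_rate)


expected = {
    unit: expected_respondents(size, rate) for unit, (size, rate) in TABLE_2.items()
}
```

Under these assumptions, the sketch yields 34 districts, 1,397 school staff survey respondents, and 64 focus group participants, which matches the targets described in section B1.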

B2. Information collection procedures

a. Notification of the sample and recruitment

The administration of the EAF coach survey will be straightforward, because the contact information for all individuals will be provided to the REL Southwest by TEA. We will communicate with the EAF coaches to secure contact information for each of their district project leads. The REL Southwest team will send emails to each EAF coach and district project lead inviting them to take the respective survey by clicking on a personalized link.

To identify participants for the school staff survey, we will provide district project leads with contact list templates that outline how best to provide contact information for school administrators and counselors. Once project leads have sent us contact information, we will then send all relevant staff members a personalized email inviting them to take the survey. We will send regular reminders to each potential respondent who has neither completed the survey nor opted out of all future communications.

To recruit participants for the focus groups, we will work in consultation with the district project leads to select school staff members who oversee advising efforts at the schools (such as counselors and administrators) or work directly with students (such as teachers). Approximately 64 school staff members will be recruited to participate in the focus groups, with the goal of including about four members representing at least two schools from each of the 16 districts participating in the one-on-one interviews.

b. Statistical methodology for stratification and sample selection

We will contact the population of EAF school districts and accept the first 34 districts that indicate their willingness to take part in data collection and fit the stratification parameters described in section B1. We will contact all school administrators and counselors in these 34 districts and invite them to participate in the survey. For the interviews, we will select 8 EAF coaches from the population of 12 EAF coaches, using a purposive sampling method. We will then purposively select 16 district project leads to participate in district staff interviews. For the focus groups, we will recruit approximately 64 school staff members, with the goal of including about four staff members representing at least two schools from each of the 16 districts participating in the one-on-one interviews. For both the interviews and the focus groups, whenever a sampled staff member declines to participate, we will replace them with a similar staff member and continue this process until we have the appropriate number of participants.

We will use the universe of data from the ERC and therefore will perform no sampling or stratification.

c. Estimation procedures

Overview

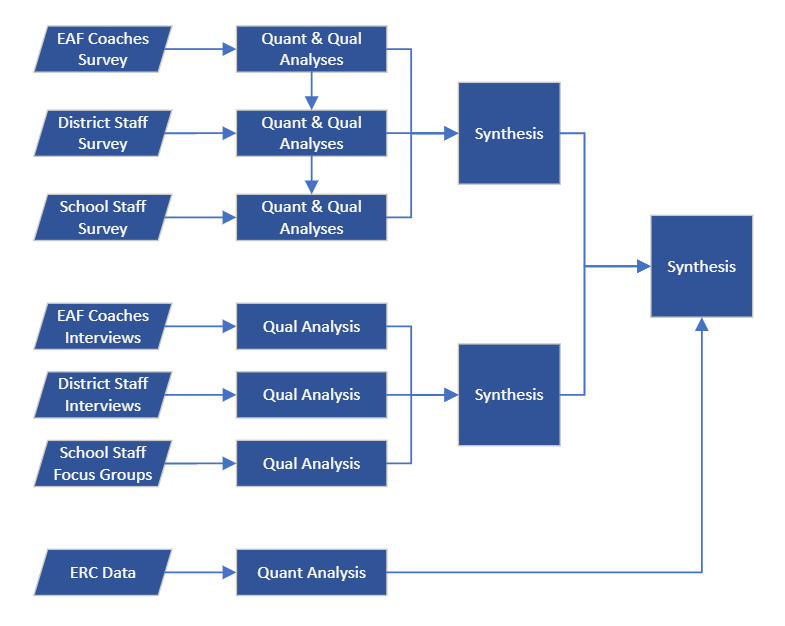

The REL Southwest will use a descriptive mixed methods approach to analyze the data, employing a concurrent triangulation design (Creswell et al., 2003) to provide an in-depth characterization of districts in terms of their implementation of the EAF and the preparation of their students for postsecondary success. Quantitative and qualitative data will be collected concurrently, analyzed separately, and then synthesized by comparing the results of each type of analysis and developing an overall set of findings that answer the RQs. A visual overview of the analysis design is in figure 1. In this figure, each arrow indicates how a data source or analysis in this study will enable, inform, or contribute to another part of the analyses. A description of how the data will be analyzed for each RQ is provided in the sections that follow.

Figure 1. Overview of data analyses

EAF = Effective Advising Framework; ERC = education service center; Qual = qualitative; Quant = quantitative

Research Questions 1 and 2

Surveys

The REL Southwest researchers will download all survey data from the Qualtrics system once the survey window is closed and process close-ended survey responses (that is, those with Likert-scale or numerical-count responses) using statistical software. The first goal of this analysis is to provide overarching descriptive statistics from each of the three surveys that summarize survey respondents’ views as they relate to each RQ. In addition to these estimates of the views of all survey respondents, we also will disaggregate responses by district so that we can summarize the responses of school staff within each district and compare these estimates across districts. Attention will be given to how results may vary for different types of districts (for example, rural vs. urban, cohort 1 vs. cohort 2 vs. cohort 3).

Open-ended responses will be imported into Atlas.ti, and unique identifiers for survey participants will be preserved. This process will enable us to query the coded data by participant characteristics, such as school district demographics, size, or locale, to identify trends across short-answer responses. Two qualitative coders will use thematic analysis (Braun & Clarke, 2006) and a combination of inductive and deductive coding (Saldaña, 2021) to analyze participants’ responses. To establish intercoder reliability or agreement (O’Connor & Joffe, 2020), the two coders will collaboratively and iteratively review the dataset to unitize the survey responses and inductively develop a stable set of codes and themes. The qualitative analysis team will craft analytic memos for each open-ended survey item, documenting emerging themes, questions, and connections to findings from other open-ended survey items. The qualitative analysts will regularly engage in coding debriefs with other members of the study team, using analytic memos to guide the peer debriefing conversations. Finally, to support the intercoder reliability process, the team will create a codebook defining the inductively developed codes. For each code, the codebook will include a definition along with example and nonexample data excerpts. This codebook will then be submitted as an appendix to the study. Once the coding scheme has been finalized, the two coders will independently code a randomly selected 10 percent of survey responses using the intercoder agreement tool embedded in Atlas.ti. The two coders’ reliability will be evaluated using Krippendorff’s alpha, calculated for each theme (Hayes & Krippendorff, 2007). When coders reach a minimum Krippendorff’s alpha of 0.80, they will independently use the codebook to deductively analyze the remaining open-ended survey responses.
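To make the reliability check concrete, the following Python sketch computes Krippendorff's alpha for the simplest case: two coders, nominal codes, and no missing data. It is illustrative only. The study plans to use the intercoder agreement tool in Atlas.ti, and the function name, the theme labels, and the restriction to two coders with complete data are assumptions made for this example.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal codes, no missing data."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same nonempty set of units.")

    # Coincidence matrix: each unit contributes the ordered pairs (a, b) and (b, a).
    coincidences = Counter()
    for a, b in zip(coder_a, coder_b):
        coincidences[(a, b)] += 1
        coincidences[(b, a)] += 1

    n = 2 * len(coder_a)  # total number of pairable values

    # Marginal totals: how often each code appears in the coincidence matrix.
    totals = Counter()
    for (code, _), count in coincidences.items():
        totals[code] += count

    # Observed and expected disagreement (any mismatch counts as 1 for nominal data).
    observed = sum(v for (c, k), v in coincidences.items() if c != k) / n
    expected = sum(totals[c] * totals[k] for c, k in permutations(totals, 2)) / (
        n * (n - 1)
    )
    if expected == 0:
        raise ValueError("Alpha is undefined when only one code is ever used.")
    return 1.0 - observed / expected


# Hypothetical theme assignments for six survey responses.
coder_1 = ["barrier", "support", "support", "barrier", "other", "support"]
coder_2 = ["barrier", "support", "barrier", "barrier", "other", "support"]
alpha = krippendorff_alpha_nominal(coder_1, coder_2)
meets_threshold = alpha >= 0.80  # threshold named in the text above
```

Unlike raw percentage agreement, alpha corrects for the agreement expected by chance, so two coders who agree on 60 percent of units can still produce an alpha near zero when one code dominates.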

The REL Southwest will conduct the analyses of the survey results in a hierarchical manner across the three surveys so that findings at one level (for example, districts) can be used to inform the findings of the next level (for example, schools). In other words, we will first analyze the results of the EAF coach survey, followed by the district project lead survey, and finally the school staff survey. If any clear and substantial differences between EAF coaches emerge from the analysis of the EAF coach survey, we will consider whether those differences can be applied to our interpretation of the district survey results. For example, if the analysis of the EAF coach survey reveals that each EAF coach can be categorized into one of two distinct types of approaches to implementing the EAF, we might consider whether grouping districts according to the approach taken by their EAF coaches aids in interpreting the results of the district project lead survey.

Similarly, if results of the district staff survey show that districts can be grouped into distinct categories based on their implementation plans, we may consider disaggregating school staff survey results by these groups to determine whether schools vary across these district-level categories. For example, if the analysis of the district staff survey reveals that a third of the school districts implemented program leadership training for school administrators whereas the other two-thirds did not hold any leadership trainings, we might consider whether responses to the question about factors that support or hinder implementation differ for school staff members in districts with the leadership training versus those without such training.

In addition, overall results from similar items across all three surveys will be compared to understand how participants’ perceptions and implementation of the EAF differ across levels (such as EAF coaches, districts, and schools). Attention also will be given to differences between cohorts to understand how districts’ foci and efforts vary according to the length of time they have been implementing the EAF. This examination across cohorts also will allow us to consider how changes made to the EAF since the initial year of the initiative may have influenced the way districts approach implementation.

One-on-one and focus group interviews

Following the conclusion of each one-on-one and focus group interview, we will download the videoconferencing audio file and submit it electronically to a transcription service. The resulting transcriptions, when returned, will be reviewed for accuracy, added to our qualitative data inventory, and then stored in secure cloud storage. At the conclusion of qualitative data collection, the research team will create a project in Atlas.ti, the qualitative data analysis software, to conduct manual coding. Using Atlas.ti increases coding reliability and validity because it serves as a data organization tool, facilitates collaborative coding, automatically creates a coding audit trail, and enables the researchers to systematically query coded data and create multimodal analysis reports.

Once the qualitative dataset, including both interview and focus group transcripts, is imported into the qualitative data analysis software, the coders will take a grounded theory approach to code the data (Thornberg & Charmaz, 2014) and construct theories about the actions and processes described during data collection. Coders will analyze the data inductively and then deductively through coding cycles beginning with data collected from the EAF coaches. The coders will begin analyzing the data with line-by-line open coding to capture actions, processes, and relationships described by participants. Throughout the open-coding cycle, the qualitative analysts will engage in constant comparison to refine open codes and make connections across data. At the end of this coding cycle, qualitative researchers will collaboratively develop code categories by sorting and reducing codes into relevant groups. The qualitative analyst team will then write memos defining and describing the codes generated during open coding and present these to the larger research team. The research team, as a whole, will then identify which codes to center during the second round of coding, known as “focused coding.” During this second round, the analysts will use the selected codes to identify, define, and synthesize main themes in the data (Charmaz, 2014). They will also categorize codes into concepts and refine their conceptual categories by working across the dataset to develop an understanding of processes and theoretical understandings of experiences captured in the dataset (Charmaz, 2014). Conceptual codes will be defined in memos.

The grounded theory coding process will be followed iteratively for the district project lead interviews and the school staff focus groups. Codes developed during the inductive analysis of EAF coach interviews will be applied deductively to district interviews and staff focus groups to guide focused coding and category creation. By combining inductive and deductive approaches to coding, the research team will be able to explore high-priority research constructs and identify unanticipated themes that arise from participants’ self-reported experiences.

Throughout the qualitative data analysis process, researchers will craft analytic memos documenting emerging codes, categories, theories, themes, questions, and connections to other data sources in the applied research project. Primary coders will regularly engage in coding debriefs with other members of the study team, using analytic memos to guide the peer debriefing conversation. Finally, to support the coding process and document final analysis, the qualitative analysts will develop a series of codebooks that include a code definition for each code; examples and nonexamples of data excerpts for each code definition; code frequency counts; and tables disaggregating code counts by key characteristics such as EAF cohort, district size, and district locale.

Research Question 3

The REL Southwest will access secondary quantitative data on student demographics and CCMR indicators for the 52 EAF districts through the ERC and explore these data using a series of descriptive analyses. This will allow us to answer RQ3 and provide context for the findings of RQs 1 and 2. The research team will then use a descriptive summary of data for all districts in the state to provide some indication of the extent to which the findings may be generalizable. Demographic information will include data on gender, race/ethnicity, economic status, disability status, Section 504 status, and emergent bilingual/English learner student status. We also will examine the specific measures that TEA uses to assess CCMR and that are defined in the Comprehensive Texas Performance Reporting System Glossary (table 3).

Table 3. College, career, and military readiness (CCMR) criteria as defined by the CCMR accountability and CCMR Outcomes Bonus systems in Texas

| Standard | Criteria category | CCMR accountability system | CCMR Outcomes Bonus system |
| --- | --- | --- | --- |
| College ready | Postsecondary enrollment | | Enroll in a postsecondary institution immediately following high school AND |
| | Associate’s degree | Earn an associate’s degree before graduating from high school OR | Earn an associate’s degree before graduating from high school OR |
| | Texas Success Initiative | Meet assessment standards for math and ELA/reading on the TSIA, SAT, or ACT or earn credit for ELA and math college preparation courses OR | Meet assessment standards for math and ELA/reading on the TSIA, SAT, or ACT or earn credit for ELA and math college preparation courses |
| | Dual credit | Earn at least three college credit hours in ELA or math or nine hours in any subject OR | |
| | AP/IB | Score a 3 or higher on an AP exam or a 4 or higher on an IB exam OR | |
| | OnRamps | Earn 3 or more hours of college credit in any subject area | |
| Career or military ready | Texas Success Initiative | Meet assessment standards for math and ELA/reading on the TSIA, SAT, or ACT or earn credit for ELA and math college preparation courses OR | Meet assessment standards for math and ELA/reading on the TSIA, SAT, or ACT or earn credit for ELA and math college preparation courses AND |
| | Industry-based certification | Earn an industry-based certificate OR | Earn an industry-based certificate OR |
| | Level I or II certificate | Earn a level I or level II certificate in any workforce education area OR | Earn a level I or level II certificate in any workforce education area |
| | IEP and workforce readiness | Students with a disability only: Graduate with graduation code 04, 05, 54, or 55 OR | |
| | Special education advanced diploma | Students with a disability only: Graduate under an advanced diploma plan OR | |
| | Armed forces | Enlist in the military | |

AP = Advanced Placement; ELA = English language arts; IB = International Baccalaureate; IEP = individualized education program; TSIA = Texas Success Initiative Assessment

Note: For each criterion listed in this table, high school graduation also is required to be considered college or career/military ready.

Table 3 provides an overview of the criteria for meeting CCMR according to two systems used by TEA to monitor postsecondary readiness. The CCMR accountability system set of criteria is used to define CCMR for the purpose of informing school and district accountability ratings. The CCMR Outcomes Bonus system set of criteria is used to measure CCMR for the purpose of determining school districts’ eligibility for additional funding under the CCMR Outcomes Bonus. Based on these measures and criteria categories, TEA calculates a number of different CCMR rates, including the following:

College, Career, or Military Ready: The percentage of annual graduates who meet the criteria for either college or career/military readiness

Only College Ready: The percentage of annual graduates who meet the criteria for college readiness but not career/military readiness

Only Career/Military Ready: The percentage of annual graduates who meet the criteria for career/military readiness but not college readiness

College Ready and Career/Military Ready: The percentage of annual graduates who meet the criteria for both college and career/military readiness

College Ready: The percentage of annual graduates who meet the criteria for college readiness

Career or Military Ready: The percentage of annual graduates who meet the criteria for career/military readiness
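The six rates above can be illustrated with a minimal sketch. It assumes each annual graduate has already been assigned two derived indicators, one for college readiness and one for career/military readiness; the `Graduate` class, its field names, and the result keys are hypothetical and are not ERC variable names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Graduate:
    """One annual graduate; both flags are hypothetical derived indicators."""
    college_ready: bool
    career_military_ready: bool


def ccmr_rates(graduates):
    """Return the six CCMR rates as percentages of annual graduates."""
    n = len(graduates)
    if n == 0:
        raise ValueError("no graduates in the group")

    def pct(count):
        return 100.0 * count / n

    college = sum(g.college_ready for g in graduates)
    career = sum(g.career_military_ready for g in graduates)
    both = sum(g.college_ready and g.career_military_ready for g in graduates)
    return {
        "college_career_or_military_ready": pct(college + career - both),
        "only_college_ready": pct(college - both),
        "only_career_military_ready": pct(career - both),
        "college_and_career_military_ready": pct(both),
        "college_ready": pct(college),
        "career_or_military_ready": pct(career),
    }


# Illustrative data only: one graduate in each of the four possible combinations.
example = [
    Graduate(True, True),
    Graduate(True, False),
    Graduate(False, True),
    Graduate(False, False),
]
rates = ccmr_rates(example)
```

In this sketch the two "only" rates and the "both" rate are mutually exclusive, so together they sum to the overall College, Career, or Military Ready rate.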

Most of the measures needed to calculate these rates are available in the ERC, but several are not. The REL Southwest will work with TEA to add to the ERC the remaining variables needed to calculate the rates in the foregoing list. If we are unable to obtain these data, we will calculate the CCMR rates with only the data that are available.

The research team will compute CCMR rates for the districts participating in the study, including overall rates for the entire sample and rates for each individual district. We will calculate two sets of rates. One set will be computed using the criteria for the accountability system, and the other set will be calculated using the criteria for the outcomes bonus system. In addition, we will also calculate rates for each individual criterion listed in table 3 (that is, the percentage of graduates who earned an associate’s degree, the percentage of graduates who met the dual credit criterion, and so on). This process will be repeated for each school year where data are available, going back to the 2015/16 school year. These data will allow us to plot the trajectory of CCMR rates over time. Our analysis of CCMR rates across time will not allow us to make any inferences about the impact of implementation of the EAF on CCMR rates, but it will provide information on the historical context in which the EAF is being implemented. TEA decided to implement the EAF due to an expressed concern about suboptimal CCMR rates, so tracking those rates to a time before the EAF was established will reveal those trends that prompted TEA’s initial concerns. The external validity of our survey, interview, and focus group findings will likely only extend to districts with similar baseline CCMR rates or even only to districts with similar historical CCMR rate trends. Thus, our descriptive analysis of CCMR rates within the EAF pilot districts will inform understanding of the extent to which our qualitative findings can be generalized.
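As an illustration of how rates for each district and school year might be tabulated, the following sketch groups graduate records by an arbitrary key. The record fields (`district`, `school_year`, `meets_ccmr`) and the sample values are assumptions made for this example, not the ERC file layout.

```python
from collections import defaultdict


def ccmr_rate_by(records, key):
    """Percentage of graduates meeting CCMR within each group defined by key(record)."""
    counts = defaultdict(lambda: [0, 0])  # group -> [graduates meeting CCMR, all graduates]
    for rec in records:
        group = key(rec)
        counts[group][0] += int(rec["meets_ccmr"])
        counts[group][1] += 1
    return {group: 100.0 * met / total for group, (met, total) in counts.items()}


# Illustrative records only; field names are hypothetical.
records = [
    {"district": "D1", "school_year": "2015/16", "meets_ccmr": True},
    {"district": "D1", "school_year": "2015/16", "meets_ccmr": False},
    {"district": "D1", "school_year": "2016/17", "meets_ccmr": True},
    {"district": "D2", "school_year": "2015/16", "meets_ccmr": False},
]

# Rates for each individual district in each school year.
by_district_year = ccmr_rate_by(records, lambda r: (r["district"], r["school_year"]))

# Overall rates for the entire sample in each school year.
overall_by_year = ccmr_rate_by(records, lambda r: r["school_year"])
```

The same function could group by any other field carried on the record, such as a student subgroup or district locale, by passing a different `key`.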

Moreover, the research team will calculate CCMR rates for groups of students based on race/ethnicity; gender; and indicators of economic disadvantage, emergent bilingual/English learner student status, and student disability status. We will make descriptive comparisons to understand how CCMR rates vary across student demographics. In addition to student characteristics, we will make comparisons of CCMR rates across district-level factors, such as district size (that is, number of students enrolled) and locale (rural, urban, or suburban).

Compiling these data and computing these metrics will require cleaning and merging multiple files across multiple years. All student-level data files in the ERC contain the same key identifiers for students so that student records can be linked across files. Derived data files also will contain district information so that measures can be disaggregated by districts participating in the study.
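The linking step can be sketched as a simple keyed join. This example assumes two hypothetical files that share a student identifier and a school year; the field names `student_id`, `school_year`, `district`, and `meets_ccmr` are placeholders and do not reflect actual ERC variable names.

```python
def link_student_files(enrollment_rows, outcome_rows):
    """Attach outcome fields to enrollment rows that share a student ID and school year."""
    outcomes = {(r["student_id"], r["school_year"]): r for r in outcome_rows}
    linked = []
    for row in enrollment_rows:
        match = outcomes.get((row["student_id"], row["school_year"]), {})
        # Enrollment fields take precedence if both files carry the same field name.
        linked.append({**match, **row})
    return linked


# Illustrative rows only.
enrollment = [
    {"student_id": "S1", "school_year": "2021/22", "district": "D1"},
    {"student_id": "S2", "school_year": "2021/22", "district": "D2"},
]
outcomes = [
    {"student_id": "S1", "school_year": "2021/22", "meets_ccmr": True},
]
linked = link_student_files(enrollment, outcomes)
```

Every enrollment row is retained, so students without a matching outcome record remain visible and can be reviewed during data cleaning.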

d. Degree of accuracy needed for the purpose described in the justification

All estimates derived from both survey results and the analysis of ERC data will be unweighted means and proportions used solely for descriptive purposes. For the survey, none of the questions are designed to be combined into aggregate scales, and we will summarize findings by calculating the average response values for each survey item across all respondents. These estimates are only meant to summarize survey responses, and we will make no claim to generalize these findings to any wider population. By contrast, the ERC database contains data on the population of Texas students. As a result, we will not need to make any inference based on samples, and a benchmark for accuracy is irrelevant.
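A minimal sketch of the item-level summary follows. It assumes responses are coded numerically and that an item a respondent left blank is excluded from that item's mean; both the coding and the handling of blanks are assumptions made for illustration, and the item names are hypothetical.

```python
from statistics import mean


def item_means(responses):
    """Unweighted mean per survey item, skipping items a respondent left blank (None)."""
    items = sorted({item for r in responses for item in r})
    result = {}
    for item in items:
        values = [r[item] for r in responses if r.get(item) is not None]
        result[item] = mean(values) if values else None
    return result


# Illustrative responses only: the second respondent skipped q2, the third never saw it.
responses = [
    {"q1": 4, "q2": 2},
    {"q1": 2, "q2": None},
    {"q1": 3},
]
summary = item_means(responses)
```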

e. Unusual problems requiring specialized sampling procedures

There are no unusual problems requiring specialized sampling procedures.

f. Any use of periodic data collection cycles to reduce burden

This project will collect data one time for recruitment, followed by one set of surveys per targeted staff member and one round of interviews or focus groups for a subset of staff. Each data collection activity will be performed only once. The Texas ERC is a database housed at the University of Texas at Austin, and accessing those data places no burden on any member of the public.

B3. Methods to maximize responses

We expect that EAF coaches and district project leads will respond to the survey at high rates. They are likely invested in the implementation of the EAF and will want to share their thoughts on the program. The response rate will likely be lower for school staff, though we still expect a relatively high response rate from principals, assistant/vice principals, and counselors due to their position of leadership and their commitment to their schools’ reputation. In order to boost our response rate, we will both send personalized emails to staff who are slow to respond and ask EAF coaches and district project leads to reach out on our behalf to inform school staff of the importance of the survey. To encourage participation, we will offer incentives. For the EAF coach and district project lead surveys, we will inform potential participants that they will receive a $50 Amazon gift card if they complete the survey. For the school staff survey, we will inform potential participants that 20 survey participants will be selected at random to receive a $50 Amazon gift card. Because of this, we anticipate achieving the 85 percent response rate that is preferred by the National Center for Education Evaluation and Regional Assistance (IES, 2013).

Consistent with the National Center for Education Statistics (NCES) standards, we will conduct a nonresponse bias analysis when fewer than 85 percent of sampled members respond to the surveys. We will assess nonresponse bias by comparing survey respondents to all sampled members on a few variables for which there may be reason to suspect correlation with survey response, such as role, school grade level, school size, and district size. Moreover, to assess potential bias due to which districts do and do not respond to the survey, we will use ERC data to perform a descriptive analysis of district-level differences depending on participation in data collection. After survey data collection is complete, we will place all Texas school districts into four categories: (1) districts that are not participating in the EAF, (2) districts participating in the EAF that were not selected or did not choose to take part in data collection, (3) districts that consented to take part in data collection but that are absent from one or more of our survey samples due to systematic nonresponse, and (4) participating districts whose staff participated in the survey. We will then use ERC data to calculate the average student-level demographic characteristics and CCMR rates for each of these four groups. This comparison will provide context to the reader about the extent to which our results are generalizable.
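The respondent-versus-sample comparison can be sketched as follows. The 85 percent threshold comes from the text above; the member records, the `id` and `role` fields, and the function names are hypothetical and serve only to illustrate the descriptive comparison.

```python
from collections import Counter

THRESHOLD = 0.85  # response rate below which a nonresponse bias analysis is conducted


def response_rate(sampled, respondent_ids):
    """Share of sampled members who responded."""
    return sum(1 for m in sampled if m["id"] in respondent_ids) / len(sampled)


def compare_distribution(sampled, respondent_ids, variable):
    """For each category of `variable`: (share among all sampled, share among respondents)."""
    respondents = [m for m in sampled if m["id"] in respondent_ids]
    all_counts = Counter(m[variable] for m in sampled)
    resp_counts = Counter(m[variable] for m in respondents)
    return {
        category: (
            all_counts[category] / len(sampled),
            resp_counts[category] / len(respondents) if respondents else 0.0,
        )
        for category in sorted(all_counts)
    }


# Illustrative sample only.
sampled = [
    {"id": 1, "role": "principal"},
    {"id": 2, "role": "counselor"},
    {"id": 3, "role": "counselor"},
    {"id": 4, "role": "teacher"},
]
respondent_ids = {1, 2}

rate = response_rate(sampled, respondent_ids)
needs_analysis = rate < THRESHOLD
by_role = compare_distribution(sampled, respondent_ids, "role")
```

Large gaps between the two shares for a category would suggest that respondents differ from the full sample on that variable.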

B4. Test of procedures

Cognitive interviews will be conducted for each data collection instrument to ensure that the questions are intelligible, relevant, and conducive to obtaining the type of information for which they are designed. We will conduct no more than five cognitive interviews per collection instrument. The cognitive interviews will take place during the 2025 calendar year.

Research team members will select the participants and conduct the interviews. Participants will be selected as a convenience sample from within research team members’ existing networks. All interviewees will have some experience working in Texas public school systems at the school, district, or regional level. Each participant will be given a list of survey or interview questions and will be asked to respond to each question while voicing aloud their thoughts as they read and respond. The interviewer will take notes during this process. After the participant finishes answering the questions, the interviewer may ask follow-up questions as needed to better understand the participant’s perceptions of the questions. After the cognitive interviews are complete, research team members will compare notes and discuss potential improvements to the wording of the questions and the structure of the surveys and interviews. If modifications to the data collection instruments are substantive, the REL Southwest will submit to IES the revised instruments and a memo outlining the changes.

B5. Individuals consulted on design

The American Institutes for Research (AIR), ED’s contractor for the REL Southwest, is conducting this project. The principal investigators will be Holly Williams, PhD (512-773-4133), REL Southwest Texas Education Agency Tri-Agency Workforce Priorities partnership colead and senior researcher at AIR, and Jayce Warner, PhD (210-386-5288), senior research scientist at Gibson Consulting Group, AIR’s subcontractor for this REL Southwest project. Additional staff from the REL Southwest contributing to the study methods, instrument development, and data collection are Dr. Stacia Long (817-658-6369) and Dr. Brian Fitzpatrick (504-215-9100). Additional staff from the REL Southwest who were consulted on the statistical and design aspects of the project include Dr. Lynn Mellor (512-775-5770), Dr. Yinmei Wan (630-649-6500), and Dr. Amy Feygin (312-288-7600). The quality assurance reviewer, external to the REL Southwest, is Dr. Kristina Zeiser (202-403-6320).

References

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101.

Charmaz, K. (2014). Constructing grounded theory (2nd ed.). Sage.

Hayes, A. F., & Krippendorff, K. (2007). Answering the call for a standard reliability measure for coding data. Communication Methods and Measures, 1(1), 77–89. https://doi.org/10.1080/19312450709336664

Institute of Education Sciences. (2013). NCEE guidance for REL study proposals, reports, and other products. U.S. Department of Education.

O’Connor, C., & Joffe, H. (2020). Intercoder reliability in qualitative research: Debates and practical guidelines. International Journal of Qualitative Methods, 19. https://doi.org/10.1177/1609406919899220

Saldaña, J. M. (2021). The coding manual for qualitative researchers (4th ed.). Sage.

Thornberg, R., & Charmaz, K. (2014). Grounded theory and theoretical coding. In The SAGE handbook of qualitative data analysis (5th ed., pp. 153–169). Sage.

| File Type | application/vnd.openxmlformats-officedocument.wordprocessingml.document |

| Author | Rich, Patrick |

| File Modified | 0000-00-00 |

| File Created | 2024-07-21 |
