Part B HSLS 2009 2nd Follow-up Main Study

High School Longitudinal Study of 2009 (HSLS:09) Second Follow-up Main Study and 2018 Panel Maintenance

OMB: 1850-0852

High School Longitudinal Study of 2009 (HSLS:09) Second Follow-up Main Study

Supporting Statement

Part B

OMB# 1850-0852 v.17

National Center for Education Statistics

U.S. Department of Education

August 2015

Revised July 2016

Section

B. Collection of Information Employing Statistical Methods

B.1 Target Universe and Sampling Frames

B.2 Statistical Procedures for Collecting Information

B.2.b Institution Sample Phase I and Phase II

B.3 Methods for Maximizing Response Rates

B.3.b Interviewing Procedures

B.3.d Postsecondary Education Transcript Study and Financial Aid Record Collection

B.3.e HSLS:09 Postsecondary Education Transcript Study (PETS) Collection

B.3.f HSLS:09 Student Financial Aid Record Collection (FAR)

EXHIBITS

Number

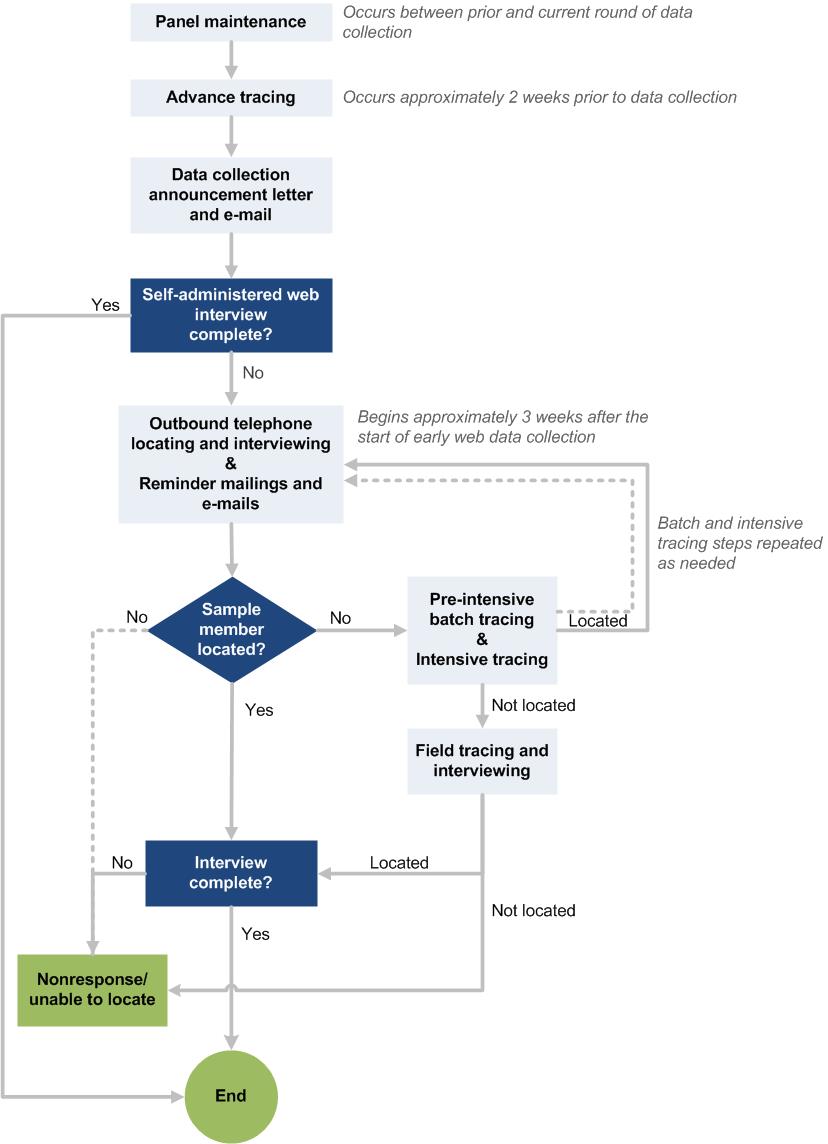

Exhibit B-1. Tracing and Locating Process

Exhibit B-3. Response Rates by Experimental Condition and Phase of Data Collection

Exhibit B-4. Simulated impact of responsive design interventions on sample representativeness

Exhibit B-5. Simulated effectiveness of responsive design interventions: incentive boost

Exhibit B-7. Calibration Sample Sizes, by Subgroup

Exhibit B-8. Data Collection Schedule and Phases

Exhibit B-9. Main study baseline and incentive boost experiments

Exhibit B-10. Candidate Variables for the Main Study Response Likelihood Model

Exhibit B-11. Candidate Variables for the Main Study Bias Likelihood Model

B. Collection of Information Employing Statistical Methods

This section describes the target universe for the High School Longitudinal Study of 2009 (HSLS:09) and the sampling and statistical methodologies proposed for the second follow-up main study. This section also addresses suggested methods for maximizing response rates and for tests of procedures and methods, and introduces the statisticians and other technical staff responsible for design and administration of the study.

B.1 Target Universe and Sampling Frames

B.1.a Student Universe

The base-year target population for HSLS:09 consisted of 9th grade students in the fall of 2009 in public and private schools that include 9th and 11th grades. The target population for the second follow-up is this same 9th grade cohort in 2016. The target population for the postsecondary transcripts and student financial aid records collections is the subset of this same 9th grade cohort who had postsecondary education enrollment as of 2016.

B.1.b Institution Universe

The high school universe consisted of public and private schools in the United States with a 9th and 11th grade as of the fall of 2009.

The postsecondary institution universe is defined as all postsecondary institutions attended by HSLS:09 second follow-up sample members. These institutions will be identified via a two-phase approach consisting of self-reports in the first phase and postsecondary transcript review in the second phase. These institutions will constitute the sample from which postsecondary transcripts and student financial aid records will be collected.

To be eligible for the HSLS:09 second follow-up, an institution must meet the following criteria:

• be attended, or have been attended, by one or more HSLS:09 second follow-up sample members;

• offer an educational program designed for persons who had completed secondary education;

• offer at least one academic, occupational, or vocational program of study lasting at least 3 months or 300 clock hours;

• offer courses that are open to more than the employees or members of the company or group (e.g., union) that administered the institution; and

• be located in the 50 states or the District of Columbia.

Institutions providing only vocational, recreational, or remedial courses or only in-house courses for their own employees will be excluded.

B.2 Statistical Procedures for Collecting Information

The HSLS:09 second follow-up data collection includes a student survey and collection of postsecondary transcripts and financial aid records from postsecondary institutions attended by the HSLS:09 student sample. The HSLS:09 postsecondary education transcript study (PETS) collection and financial aid record (FAR) collection will be divided into two phases.

Phase I. The set of student self-reported institutions will be asked to participate in the PETS transcript collection and FAR collection.

Phase II. Institutions with new student-institution pairs identified in transcripts collected during phase I (and phase II) will be contacted to provide transcript and student financial aid data.

This section describes the student sample and the procedures for identifying institutions across phase I and phase II.

B.2.a Student Sample

Excluding deceased sample members, those who elected to withdraw from HSLS:09, and those who did not respond in either the base year or first follow-up, the same students who were sampled for the 2009 base-year data collection will be recruited to participate in the second follow-up main study’s 2016 data collection. The main study sample will include 25,184 non-deceased sample members. Of these, 23,316 will be fielded; the remaining cases have been identified as final refusals or as sample members who did not respond in either the base year or first follow-up (i.e., double nonrespondents) and will be classified as study nonrespondents.

B.2.b Institution Sample Phase I and Phase II

The total number of postsecondary institutions attended by sample members is estimated to be 3,800. Using eligibility and participation rates observed in the ELS:2002 postsecondary transcript data collection, an expected 98 percent of the estimated 3,800 institutions will be eligible, and approximately 3,230 postsecondary institutions (approximately 87 percent) will provide transcripts and financial aid record data. The institution sample for the first phase of transcript and financial aid record collection will comprise all eligible institutions reported by second follow-up sample members, including institutions reported in the 2013 Update1 as well as those self-reported in the second follow-up.
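The arithmetic behind these estimates can be checked with a short calculation. This is only a sketch using the figures quoted above; reading the 87 percent as a share of eligible institutions (rather than of all 3,800) is an inference from the numbers, not something the text states.

```python
# Figures quoted in the paragraph above.
estimated_institutions = 3800
eligibility_rate = 0.98        # rate observed in ELS:2002, per the text
expected_participants = 3230

# Expected number of eligible institutions.
expected_eligible = round(estimated_institutions * eligibility_rate)

# Participation expressed two ways (which denominator the text intends
# is an assumption; the first reading matches "approximately 87 percent").
share_of_eligible = expected_participants / expected_eligible
share_of_all = expected_participants / estimated_institutions

print(expected_eligible)              # 3724
print(f"{share_of_eligible:.1%}")     # 86.7%
print(f"{share_of_all:.1%}")          # 85.0%
```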

The second phase involves identifying new student-institution linkages, not previously reported by second follow-up sample members, from transcripts collected during phases I and II, and following up with the institutions so identified. Note that some institutions identified following transcript review will have previously been contacted during phase I or phase II and will be re-contacted in order to retrieve information for the newly linked students. Similarly, some institutions may be identified that were not included previously. These institutions will be contacted in order to retrieve transcript and financial aid information for the associated students.
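The phase II identification step amounts to repeatedly comparing the institutions named on collected transcripts against the student-institution pairs already known. The sketch below illustrates that logic only; the function name, the identifiers, and the `transfer_mentions` structure are hypothetical and do not describe the study's actual systems.

```python
from collections import deque

def identify_phase2_pairs(reported_pairs, transfer_mentions):
    """Return student-institution pairs discovered through transcript review.

    reported_pairs: set of (student_id, institution_id) from self-reports
        (the phase I sample).
    transfer_mentions: dict mapping a (student_id, institution_id) pair to
        the other institutions named on that transcript (hypothetical input).
    """
    known = set(reported_pairs)
    queue = deque(sorted(reported_pairs))
    phase2 = []
    while queue:
        student, institution = queue.popleft()
        for other in transfer_mentions.get((student, institution), []):
            pair = (student, other)
            if pair not in known:
                known.add(pair)
                phase2.append(pair)
                # A phase II transcript may itself reveal further institutions.
                queue.append(pair)
    return phase2

reported = {("s1", "A")}
mentions = {("s1", "A"): ["B"], ("s1", "B"): ["A", "C"]}
print(identify_phase2_pairs(reported, mentions))
# [('s1', 'B'), ('s1', 'C')]
```

Pairs found on phase II transcripts are queued again because, as noted above, new linkages can come from transcripts collected in either phase.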

B.3 Methods for Maximizing Response Rates

B.3.a Locating

The response rate for the HSLS:09 data collection is a function of success in two basic activities: locating the sample members and gaining their cooperation. Many factors will affect the ability to successfully locate and survey sample members for HSLS:09. Among them are the availability, completeness, and accuracy of the locating data collected in the prior interviews. The locator database includes critical tracing information for nearly all sample members and their parents, including address information for their previous residences, telephone numbers, and e-mail addresses. This database allows interviewers and tracers to have ready access to all of the contact information available and to new leads developed through locating efforts. To achieve the desired locating and response rates, a multistage locating approach that capitalizes on available data for the HSLS:09 sample will be employed. The proposed locating approach includes the following activities:

Panel maintenance to maintain up-to-date contact information for sample members.2

Advance tracing includes batch database searches, contact information updates, and intensive tracing that will be conducted prior to the start of data collection.

Prompting sample members with mail and e-mail contacts will maintain regular contact with sample members and encourage them to complete the survey.

Telephone locating and interviewing includes calling all available telephone numbers and following up on leads provided by parents and other contacts. Interviewers will take full advantage of the contacting information available for parents and other contacts (for this cohort, parent contact information is often more reliable than sample member contact information).

Pre-intensive batch tracing consists of the Premium Phone searches that will be conducted between the telephone locating and interviewing stage and the intensive tracing stage.

Intensive tracing consists of tracers checking all telephone numbers and conducting credit bureau database searches after all current telephone numbers have been exhausted.

Field tracing and interviewing will be employed during the main study to pursue targeted cases (e.g., high school non-completers) that could not be reached and interviewed by other means. This step will be particularly valuable for HSLS:09, considering that less contacting information is available for HSLS:09 sample members (e.g., SSN coverage is less than 60% for the HSLS:09 cohort) than is typically available for other NCES studies of young adult populations (such as NPSAS or B&B).

Other locating activities will take place as needed and may include additional tracing resources (e.g., matches to Department of Education financial aid data sources) that are not part of the previous stages.

The methods chosen to locate sample members are based on the successful approaches employed in earlier rounds of this study as well as experience gained from other recent NCES longitudinal cohort studies. The tracing approach is designed to locate the maximum number of sample members with the least expense. The most cost-effective steps will be taken first so as to minimize the number of cases requiring more costly intensive tracing efforts.

Exhibit B-1 demonstrates the steps in the tracing and locating process, including contacting sample members. The contacting approach will begin with panel maintenance (approved in March 2015, as part of OMB #1850-0852 v.15), in which sample members will be asked to update their contact information in advance of data collection. As part of these activities, intensive tracing will be conducted for a subset of cases (approximately 5,000) for whom address information is unknown or is known to be obsolete. At the start of data collection, we will send an announcement letter and e-mail inviting sample members to complete the interview. With the sample members who do not respond during the early self-administered web period, we will stay in regular contact by following up with periodic reminder mailings and e-mails. This approach draws upon recent NCES surveys of young adults, such as ELS:2002, NPSAS, BPS, and B&B, in which sending frequent reminders was shown to increase response without negatively impacting rates of refusal. The reminders will generally be spaced one to three weeks apart, varying the day of delivery. The exact timing of reminders will be influenced by ongoing data collection results, such as the characteristics of nonresponding sample members, the specific questions or concerns raised by sample members, patterns of sample member response, and the timing of targeted interventions. Sample contacting materials are provided in appendix D.

B.3.b Interviewing Procedures

Interviewer training. As in the second follow-up field test, contractor staff with experience in training interviewers will prepare the HSLS:09 second follow-up main study training program for telephone and field interviewers. In addition to administering the actual survey, the training protocol will prepare interviewers to locate sample members and gain their cooperation. The training manual will provide detailed coverage of the background and purpose of the study, sample design, the survey instrument, and procedures for conducting interviews. The manual will also serve as a reference for telephone and field staff throughout data collection. Training staff will also prepare training exercises, mock interviews (specially constructed to highlight potential definitional and response problems), and other training aids. At the conclusion of training, all interviewers must meet certification requirements by successfully completing a certification interview. This evaluation consists of a full-length interview with project staff observing and evaluating interviewers, as well as an oral evaluation of interviewers’ knowledge of the study’s Frequently Asked Questions. Interviewers will be expected to respond knowledgeably and extemporaneously to sample members’ questions about the study, thereby increasing their chances of gaining cooperation.

Exhibit B-1. Tracing and Locating Process

Interviewing. Interviews will be conducted using a single web-based survey instrument for all modes of data collection—self-administered (via computer or mobile device) or interviewer-administered (via telephone and field interviewers). Data collection activities will be managed through the Case Management System (CMS), which is equipped with the following capabilities:

Complete record of locating information and histories of locating and contacting efforts for each case;

Complete reporting capabilities, including default reports on the aggregate status of cases and capabilities for developing custom reports;

Automated scheduling module, which provides highly efficient case assignment and delivery functions, reduces supervisory and clerical time, and improves execution on the part of interviewers and supervisors by automatically monitoring appointments and callbacks. The scheduler delivers cases to telephone interviewers and incorporates the following efficiency features:

automatic delivery of appointment and call-back cases at specified times;

sorting of non-appointment cases according to parameters and priorities set by project staff;

complete records of calls and tracking of all previous outcomes; and

tracking of problem cases for supervisor action or supervisor review.
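The delivery rules listed for the scheduler can be illustrated with a small sketch. It is not the CMS implementation; the `Case` fields, the priority convention, and the function name are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

@dataclass
class Case:
    case_id: str
    priority: int                          # lower value = called sooner (assumed convention)
    appointment: Optional[datetime] = None # None means no appointment or callback is set

def delivery_order(cases, now):
    """Order cases for delivery to telephone interviewers at time `now`."""
    # Appointment and callback cases are delivered once their time arrives.
    due = sorted(
        (c for c in cases if c.appointment is not None and c.appointment <= now),
        key=lambda c: c.appointment,
    )
    # Non-appointment cases follow, sorted by the project-set priority.
    unscheduled = sorted(
        (c for c in cases if c.appointment is None),
        key=lambda c: c.priority,
    )
    # Cases with future appointments are held back until they are due.
    return due + unscheduled

cases = [
    Case("a", 2),
    Case("b", 1),
    Case("c", 5, datetime(2016, 3, 1, 10)),
    Case("d", 5, datetime(2016, 3, 1, 18)),
]
print([c.case_id for c in delivery_order(cases, datetime(2016, 3, 1, 12))])
# ['c', 'b', 'a']
```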

In the main study, field interviews will also be conducted via Computer Assisted Personal Interviewing (CAPI) to increase participation among subgroups of interest. Field interviewing can be an effective approach to locating and gaining cooperation from sample members who have been classified as high school non-completers or who have not enrolled in postsecondary education – both typically more difficult to locate and interview than other cases. Field interviewing can also be effective in increasing response among other potentially underrepresented groups as identified by responsive design modeling. As part of the responsive design approach, field cases will be identified and field interviewers will be assigned in geographic clusters to ensure the most efficient allocation of resources. Field interviewers will be equipped with a full record of the case history and will draw upon their extensive training and experience to decide how best to proceed with each case.

Refusal aversion and conversion. Recognizing and avoiding refusals is important to maximize the response rate. Supervisors will monitor interviewers intensively during the early weeks of data collection and provide retraining as necessary. In addition, supervisors will review daily interviewer production reports to identify and retrain any interviewers with unacceptable numbers of refusals or other problems. When an interviewer encounters a refusal, the interviewer enters comments into the CMS record that include all pertinent data regarding the refusal situation, including any unusual circumstances and any reasons given by the sample member for refusing. Supervisors review these comments to determine what action to take with each refusal; no refusal or partial interview will be coded as final without supervisory review and approval.

If follow-up to an initial refusal is not appropriate (e.g., there are extenuating circumstances, such as illness or the sample member firmly requested no further contact), the case will be coded as final and no additional contact will be made. If the case appears to be a “soft” refusal (i.e., an initial refusal that warrants conversion efforts – such as expressions of too little time or lack of interest or a telephone hang-up without comment), follow-up will be assigned to an interviewer other than the one who received the initial refusal. The case will be assigned to a member of a special refusal conversion team made up of interviewers who have proven especially skilled at converting refusals. Refusal conversion efforts will be delayed until at least 1 week after the initial refusal. Attempts at refusal conversion will not be made with individuals who become verbally aggressive or who threaten to take legal or other action. Project staff sometimes receive refusals via email or calls to the project toll-free line. These refusals are included in the CATI record of events and coded as final when appropriate.
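The refusal-handling rules above can be summarized as a small decision function. This is a sketch under stated assumptions: the `Refusal` fields, the action labels, and the choice of the first available team member are hypothetical, and in practice every outcome is subject to supervisory review.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MIN_DELAY = timedelta(days=7)  # conversion is delayed at least 1 week, per the text

@dataclass
class Refusal:
    case_id: str
    refused_on: date
    interviewer: str                      # interviewer who received the refusal
    extenuating: bool = False             # e.g., illness
    no_contact_requested: bool = False    # firm request for no further contact
    hostile_or_legal_threat: bool = False

def plan_follow_up(refusal, conversion_team):
    """Suggest the next step for a refusal (labels are illustrative only)."""
    if refusal.extenuating or refusal.no_contact_requested:
        return {"action": "final_no_contact"}
    if refusal.hostile_or_legal_threat:
        return {"action": "no_conversion_attempt"}
    # "Soft" refusal: assign to a conversion specialist other than the
    # interviewer who received the initial refusal.
    candidates = [i for i in conversion_team if i != refusal.interviewer]
    if not candidates:
        return {"action": "hold_for_supervisor"}
    return {
        "action": "attempt_conversion",
        "assigned_to": candidates[0],
        "not_before": refusal.refused_on + MIN_DELAY,
    }

print(plan_follow_up(Refusal("c1", date(2016, 4, 1), "int07"), ["int07", "int12"]))
```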

B.3.c Quality Control

Interviewer monitoring will be conducted using the Quality Evaluation System (QUEST) as a quality control measure throughout the main study data collection. QUEST is a system developed by a team of RTI researchers, methodologists, and operations staff focused on developing standardized monitoring protocols, performance measures, evaluation criteria, reports, and appropriate system security controls. It is a comprehensive performance quality monitoring system that includes standard systems and procedures for all phases of the interview process, including obtaining respondent consent for recording, interviewing respondents who refuse consent for recording, and monitoring refusals at the interviewer level; sampling of completed interviews by interviewer and evaluating interviewer performance; maintaining an online database of interviewer performance data; and addressing potential problems through supplemental training. These features and procedures are based on “best practices” identified in the course of conducting many survey research projects.

As in previous studies, QUEST will be used to monitor approximately 7 percent of all completed interviews plus an additional 2.5 percent of recorded refusals. In addition, quality supervisors will conduct silent monitoring for 2.5 to 3 percent of budgeted interviewer hours on the project. This will allow real-time evaluation of a variety of call outcomes and interviewer-respondent interactions. Recorded interviews will be reviewed by supervisors for key elements such as professionalism and presentation; case management and refusal conversion; and reading, probing, and keying skills. Any problems observed during the interview will be documented on problem reports generated by QUEST. Feedback will be provided to interviewers and patterns of poor performance (e.g., failure to use active-listening interviewing techniques, failure to probe, etc.) will be carefully monitored and noted in the feedback form that will be provided to the interviewers. As needed, interviewers will receive supplemental training in areas where deficiencies are noted. In all cases, sample members will be notified that the interview may be monitored by supervisory staff.
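To give a sense of scale for the monitoring rates quoted above, the sketch below draws a simple random sample of recordings at a given rate. QUEST's actual selection procedure (for example, sampling by interviewer) is not described in enough detail here to reproduce, so the function name, the use of simple random sampling, and the example counts are all assumptions.

```python
import random

def select_for_monitoring(recording_ids, rate, seed=None):
    """Pick approximately `rate` of the recordings for supervisor review."""
    rng = random.Random(seed)          # seeded for a reproducible draw
    n = round(len(recording_ids) * rate)
    return sorted(rng.sample(list(recording_ids), n))

# Hypothetical counts, for illustration only.
completes = [f"C{i:03d}" for i in range(200)]
refusals = [f"R{i:03d}" for i in range(40)]

monitored_completes = select_for_monitoring(completes, 0.07, seed=1)
monitored_refusals = select_for_monitoring(refusals, 0.025, seed=1)
print(len(monitored_completes), len(monitored_refusals))  # 14 1
```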

B.3.d Postsecondary Education Transcript Study and Financial Aid Record Collection

The success of the HSLS:09 PETS and FAR collections is fully dependent on the active participation of sampled institutions. The cooperation of an institution’s coordinator is essential as well, and helps to encourage the timely completion of the data collection. Telephone contact between the project team and institution coordinators provides an opportunity to emphasize the importance of the study and to address any concerns about participation.

Proven procedures. HSLS:09 procedures for working with institutions will be developed from those used successfully in other studies with transcript collections such as the ELS:2002 Postsecondary Education Transcript Study and the 2009 Postsecondary Education Transcript Study (PETS:09) that combined transcript collections for the B&B:08/09 and BPS:04/09 samples, as well as studies involving student records data collection from institutions, specifically, ELS:2002 Financial Aid Feasibility Study (FAFS), NPSAS:08, NPSAS:12, and NPSAS:16. HSLS:09 will use an institution control system (ICS) similar to the system used for ELS:2002 PETS to maintain relevant information about the institutions attended by each HSLS:09 cohort member. Institution contact information obtained from the Integrated Postsecondary Education Data System (IPEDS) will be loaded into the ICS, then used for all mailings and confirmed during a call to each institution verifying the name of the registrar and financial aid director (or other appropriate contact), address information, telephone and facsimile numbers, and email addresses. This verification call will help to ensure that transcript and student records request materials are properly routed, reviewed, and processed.

Endorsements. In past studies, the specific endorsement of relevant associations and organizations has been extremely useful in persuading institutions to cooperate. Endorsements from 16 professional associations were secured for ELS:2002 PETS and FAFS. Appropriate associations will likewise be contacted to request endorsement of the HSLS:09 PETS and FAR collections; a list of potential endorsing associations and organizations is provided in appendix F.

Minimizing burden. Different options for collecting data for sampled students are offered. Institution staff are invited to select the methodology of greatest convenience to the institution. The various options available to institutions for providing the data are discussed later in this section.

Because several NCES studies with postsecondary collections (e.g., HSLS:09, BPS, NPSAS) will be requesting transcripts and student records moving forward, NCES intends to streamline requests to postsecondary institutional records staff by minimizing the number of contacts each institution receives, thereby decreasing burden. The goal is to combine the requests for as many studies as possible that have ongoing transcript and/or student records collections. In 2017, the HSLS:09 Second Follow-up and BPS:12/17 will both have ongoing transcript and student records collections, so NCES has combined communication materials for the two studies. Relevant contacts (e.g., Institutional Records or IR staff) will get one letter informing them of an upcoming request for transcripts and student records, rather than two. Following this initial contact, as HSLS:09 and BPS collect and code transcripts, additional postsecondary institutions attended by sample students may be identified, thereby increasing the set of institutions to contact. In such cases, NCES will combine as many of the newly discovered cases into as few requests as possible. For example, if a sample member did not respond to the Second Follow-up but was known as of 2013 to have attended a postsecondary institution, the transcript received from that institution may reveal attendance at another institution between 2013 and 2016, which would then be added to the contact set. Rather than immediately sending the new institution’s IR staff a request for a single student’s transcript, the institutional contacting staff will hold this request until additional new cases have been discovered. A request will be placed later in the collection window to ask for multiple student transcripts at the same time. Because adding individual student records to one request is simpler than initiating multiple new requests, this approach will reduce burden on institutional records staff.
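The hold-and-combine approach can be pictured as grouping newly discovered student-institution pairs by institution and releasing one request per institution. The sketch below is illustrative only; the function name and the `min_batch` threshold are hypothetical, since the text does not specify how many cases must accumulate before a request is placed.

```python
from collections import defaultdict

def batch_requests(new_pairs, min_batch=2):
    """Group new (student_id, institution_id) pairs into per-institution requests.

    Returns (ready, held): institutions with at least `min_batch` students
    get a single combined request; the rest are held for later in the window.
    """
    by_institution = defaultdict(list)
    for student_id, institution_id in new_pairs:
        by_institution[institution_id].append(student_id)
    ready = {i: sorted(s) for i, s in by_institution.items() if len(s) >= min_batch}
    held = {i: sorted(s) for i, s in by_institution.items() if len(s) < min_batch}
    return ready, held

pairs = [("s1", "U100"), ("s2", "U200"), ("s3", "U100")]
ready, held = batch_requests(pairs)
print(ready)  # {'U100': ['s1', 's3']}
print(held)   # {'U200': ['s2']}
```

Held cases would still need to be requested before the collection window closes, for example by calling the function with `min_batch=1` at that point.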

Another strategy that has been successful in increasing the efficiency of institution data collections, encouraging participation, and minimizing burden is soliciting support at a system-wide level rather than contacting each institution within the system separately. A timely contact, together with enhanced procedures for verifying institution contact information, is likely to reduce the number of re-mailed requests and minimize delays caused by misrouted requests.

B.3.e HSLS:09 Postsecondary Education Transcript Study (PETS) Collection

NCES recently (2013-2014) completed ELS:2002 PETS, a collection of approximately 20,600 postsecondary transcripts, and previously completed PETS:09, a collection of approximately 45,000 postsecondary transcripts for the BPS:04/09 and B&B:08/09 samples. The same processes and systems found to be effective in those studies will be adapted for use for HSLS:09. They are described below.

Data request materials and prompting. Transcript and financial aid records data will be requested for sampled students from all institutions attended since high school. The descriptive materials sent to institutions to request data will be clear, concise, and informative about the purpose of the study and the nature of subsequent requests. The package of materials sent to the institution coordinators, provided in appendix F, will contain the following:

• A letter from RTI providing an introduction to the HSLS:09 PETS and FAR collections;

• An introductory letter from NCES on NCES letterhead;

• Letter(s) of endorsement from supporting organizations/agencies (e.g., the American Association of Collegiate Registrars and Admissions Officers);

• A list of other endorsing agencies;

• Information regarding how to log on to the secure NCES postsecondary data portal website and access the list of students for whom transcripts and financial aid information are requested, as well as a form with which institutions can request reimbursement of expenses incurred in fulfilling the request (e.g., transcript processing fees); and

• Descriptions of and instructions for the various methods of providing transcripts and financial aid records data.

The institutions will be asked, in the same requests, to participate in the PETS and FAR collections. If separate staff are identified to provide different types of data, the contact materials will be provided to those staff members as needed. Follow-up contacts will occur after the initial mailing to ensure receipt of the package and answer any questions about the study, as applicable.

Transcript data submission options. Several methods will be used for obtaining the transcript data including: (1) institution staff uploading electronic transcripts for sampled students to the secure NCES postsecondary data portal website; (2) institution staff sending electronic transcripts for sampled students by secure File Transfer Protocol; (3) institution staff sending electronic transcripts as encrypted attachments via email; (4) RTI requesting/collecting electronic transcripts via a dedicated server at National Student Clearinghouse for institutions that already use this method; (5) institution staff sending electronic transcripts via eSCRIP-SAFE™, in which institutions send data to the eSCRIP-SAFE™ server by secure internet connection where they can be downloaded only by a designated user; (6) institution staff transmitting transcripts via a secure electronic fax after a test submission of nonsensitive data confirms that the institution has the correct fax number; and as a last resort, (7) sending redacted transcripts via Federal Express. Each method is described below. (Options for providing financial aid records data are described in section B.3.f.)

A complete transcript from the institution will be requested as well as the complete transcripts from transfer institutions that the students attended, as applicable. A Transcript Control System (TCS) will track receipt of institution materials and student transcripts for HSLS:09 PETS. The TCS will track the status of each catalog and transcript request, from initial mailout of the requests through follow-up and final receipt.

Uploading electronic transcripts to the secure NCES postsecondary data portal website. Goals for HSLS:09 PETS include reducing the data collection burden on institutions, expediting data delivery, improving data quality, and ensuring data security. Because the open internet is not conducive to transmitting confidential data, any internet-based data collection effort necessarily raises the question of security. To address this, current security technology will be incorporated into the web application to ensure strict adherence to NCES confidentiality guidelines. The web server will include a Secure Sockets Layer (SSL) certificate and will be configured to force encrypted data transmission over the internet. All of the data entry modules on this site are password protected, requiring the user to log in to the site before accessing confidential data. The system automatically logs the user out after 20 minutes of inactivity.
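The inactivity rule can be expressed as a simple check against the time of the last recorded activity. The class below is a minimal sketch of that idea, not the portal's implementation; the class and method names are hypothetical.

```python
from datetime import datetime, timedelta

IDLE_LIMIT = timedelta(minutes=20)  # timeout stated in the text

class PortalSession:
    """Tracks the last activity time for one logged-in user (illustrative)."""

    def __init__(self, user, now):
        self.user = user
        self.last_activity = now

    def is_expired(self, now):
        # Expired once 20 minutes have passed with no recorded activity.
        return now - self.last_activity >= IDLE_LIMIT

    def touch(self, now):
        """Record activity; return False if the session had already timed out."""
        if self.is_expired(now):
            return False
        self.last_activity = now
        return True

start = datetime(2017, 1, 9, 9, 0)
session = PortalSession("registrar", start)
print(session.touch(start + timedelta(minutes=15)))       # True
print(session.is_expired(start + timedelta(minutes=34)))  # False
print(session.is_expired(start + timedelta(minutes=35)))  # True
```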

Files uploaded to the secure website will be stored in a secure project folder that is only accessible and visible to authorized staff members.

Institution staff sending electronic transcripts by secure File Transfer Protocol. FTPS (also called FTP-SSL) uses the FTP protocol on top of SSL or Transport Layer Security (TLS). When using FTPS, the control session is always encrypted. The data session can optionally be encrypted if the file has not been pre-encrypted. Files transmitted via FTPS will be placed in a secure project folder that is only accessible and visible to authorized staff members.
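The distinction between the encrypted control session and the optionally encrypted data session maps onto Python's standard `ftplib.FTP_TLS` client, which implements explicit FTPS. The sketch below is one possible client-side illustration, not the study's transfer software; the host, credentials, file names, and helper function names are placeholders.

```python
import ssl
from ftplib import FTP_TLS

def open_ftps(host, user, password):
    """Connect and log in; FTP_TLS.login() secures the control channel first."""
    client = FTP_TLS(context=ssl.create_default_context())
    client.connect(host)           # placeholder host supplied by the caller
    client.login(user, password)   # issues AUTH TLS before sending credentials
    return client

def send_file_ftps(client, local_path, remote_name, encrypt_data=True):
    """Upload one file, choosing whether the data channel is encrypted."""
    if encrypt_data:
        client.prot_p()   # protected (TLS) data connection
    else:
        client.prot_c()   # clear data connection, e.g., for a pre-encrypted file
    with open(local_path, "rb") as fh:
        client.storbinary(f"STOR {remote_name}", fh)

# Example usage (requires a reachable FTPS server; values are placeholders):
# client = open_ftps("ftps.example.org", "institution_user", "secret")
# send_file_ftps(client, "transcripts.zip", "transcripts.zip")
# client.quit()
```

Splitting connection setup from the upload keeps the choice of data-channel protection explicit and lets the upload step be exercised without a live server.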

Institution staff sending electronic transcripts as encrypted attachments via email. RTI will provide guidelines on encryption and creating strong passwords. Encrypted electronic files sent via email to a secure email folder will only be accessible to a limited set of authorized staff members on the project team. These files will then be copied to a project folder that is only accessible and visible to these same staff members.

Institution staff sending electronic transcripts via eSCRIP-SAFE™. This method involves the institution sending data via a customized print driver, which connects the student information system to the eSCRIP-SAFE™ server by secure internet connection. RTI, as the designated recipient, can then download the data after entering a password. The files are deleted from the server 24 hours after being accessed. The transmission between sending institutions and the eSCRIP-SAFE™ server is protected by Secure Sockets Layer (SSL) connections using 128-bit key ciphers. Remote access to the eSCRIP-SAFE™ server via the Web interface is likewise protected via 128-bit SSL. Downloaded files will be moved to a secure project folder that is only accessible and visible to authorized staff members.

Institution staff transmitting transcripts via a secure electronic fax (e-fax). It is expected that few institutions will ask to provide hardcopy transcripts. In such cases, institutions will be encouraged to use one of the secure electronic methods of transmission. If that is not possible, faxed transcripts will be accepted. Although fax equipment and software do facilitate rapid transmission of information, they also open up the possibility that information could be misdirected or intercepted by individuals to whom access is not intended or authorized. To safeguard against this, as much as is practical, the protocol will only allow for transcripts to be faxed to an electronic fax machine and only if institutions cannot use one of the other options. To ensure the fax transmission is sent to the appropriate destination, a test run with non-sensitive data will be required prior to submitting the transcripts to eliminate errors in transmission from misdialing. Institutions will be given a fax cover page that includes a confidentiality statement to use when transmitting individually identifiable information.

Transcript data received via e-fax are stored as electronic files on the e-fax server, which is housed in a secured data center at RTI. These files will be copied to a project folder that is only accessible and visible to authorized staff members.

Institution staff sending transcripts via Federal Express. When institutions ask to provide hardcopy transcripts, they will be encouraged to use one of the secure electronic methods of transmission or fax. If that is not possible, transcripts sent via Federal Express will be accepted. Before sending, institution staff will be instructed to redact any personally identifiable information from the transcript including student name, address, date of birth, and Social Security Number (if present). Paper transcripts will be scanned and stored as electronic files. These files will be stored in a project folder that is only accessible and visible to project staff members. The original paper transcripts will be shredded.

Collecting electronic transcripts via a dedicated server at National Student Clearinghouse. Transcripts will also be requested electronically via a dedicated server (formerly at the University of Texas at Austin, now hosted by National Student Clearinghouse) for institutions that currently use this method. Approximately 200 institutions are currently registered to send and receive academic transcripts in standardized electronic formats via the SPEEDE dedicated server. The server supports Electronic Data Interchange (EDI) and XML formats. Additional institutions are in the test phase with the server, which means that they are preparing for and testing the server but are not currently using it to send data. The SPEEDE server supports the following methods of securely transmitting transcripts:

• email as MIME attachment using PGP encryption;

• regular FTP using PGP encryption;

• Secure FTP (SFTP over ssh) and straight SFTP; and

• FTPS (FTP over SSL/TLS).

Files collected via this dedicated server will be copied to a secure project folder that is only accessible and visible to authorized staff members. The same access restrictions and storage protocol will be followed for these files as described above for files uploaded to the NCES postsecondary data portal website.

FERPA active consent exemption. Active student consent for the release of transcripts will not be required for HSLS:09 PETS. For certain agency purposes, the Family Educational Rights and Privacy Act of 1974 (FERPA) (34 CFR Part 99) permits institutions to release student data to the Secretary of Education and his authorized agents without consent. In compliance with FERPA, a notation will be made in the student record that the transcript has been collected for use only for statistical purposes in the HSLS:09 longitudinal study.

Quality control and initial processing. As part of quality control procedures, the importance of collecting complete transcript information for all sampled students will be emphasized to registrars. Transcripts will be reviewed for completeness. Institutional contactors will contact the institutions to prompt for missing data and to resolve any problems or inconsistencies.

Transcripts received in hardcopy form will be subject to a quick review prior to recording their receipt. Receipt control clerks will check transcripts for completeness and review transmittal documents to ensure that transcripts have been returned for each of the specified sample members. The disposition code for transcripts received will be entered into the TCS. Course catalogs will also be reviewed and their disposition status updated in the system in cases where this information is necessary and not available through CollegeSource Online. Hardcopy course catalogs will be sorted and stored in a secure facility at RTI, organized by institution. The procedures for electronic transcripts will be similar to those for hardcopy documents—receipt control personnel, assisted by programming staff, will verify that the transcript was received for the given requested sample member, record the information in the receipt control system, and check to make sure that a readable, complete electronic transcript has been received.

The initial transcript check-in procedure is designed to efficiently log the receipt of materials into the TCS as they are received each day. The presence of an electronic catalog (obtained from CollegeSource Online) will be confirmed during the verification process for each institution and noted in the TCS. The remaining catalogs will be requested from the institutions directly and will be logged in the TCS as they are received. Transcripts and supplementary materials received from institutions (including course catalogs) will be inventoried, assigned unique identifiers based on the IPEDS ID, reviewed for problems, and logged into the TCS.

Data processing staff will be responsible for (1) sorting transcripts into alphabetical order to facilitate accurate review and receipt; (2) assigning the correct ID number to each document returned and affixing a transcript ID label to each; (3) reviewing the materials to identify missing, incomplete, or indecipherable transcripts; and (4) assigning appropriate TCS problem codes to each of the missing and problem transcripts plus providing detailed notes about each problem to facilitate follow-up by Institutional Contactors and other project staff. Project staff will use daily monitoring reports to review the transcript problems and to identify approaches to solving the problems. Web-based collection will allow timely quality control, as RTI staff will be able to monitor data quality for participating institutions closely and on a regular basis. When institutions call for technical or substantive support, the institution’s data will be queried in order to communicate with the institution much more effectively regarding any problems. Transcript data will be destroyed or shredded after the transcripts are keyed, coded, and quality checked.

Transcript keying and coding. Once student transcripts and course catalogs are received, and missing information is collected, keying and coding of transcripts (including courses taken) will occur. As part of PETS:09, the course classification structure used on NELS:88 has been updated and enhanced. The result, the 2010 College Course Map3, is a hybrid PETS coding taxonomy that makes it easier for Keyer-Coders (KCs) to select an appropriate code for the courses they identify on transcripts. This taxonomy was used for coding courses for ELS:2002 PETS and will be used for HSLS:09 PETS. KCs will have full access to all transcript-related documents including course catalogs or other course listings provided. All transcript-related documents will be thoroughly reviewed before data are abstracted from them.

Transcript keying and coding quality control. A comprehensive supervision and quality control plan will be implemented during transcript keying and coding. At least one supervisor will be onsite at all times to manage the effort and simultaneously perform QC checks and problem resolution. Verifications of transcript data keying and coding at the student level will be performed. Any errors will be recorded and corrected as needed. Once the transcripts for each institution are keyed and coded, transcript course coding at the institution level will be reviewed by expert coders to ensure that (1) coding taxonomies have been applied consistently and data elements of interest have been coded properly within institution; (2) program information has been coded consistently according to the program area and sequence level indicators in course titles; (3) records of sample members who attended multiple institutions do not have duplicate entries for credits that transferred from one institution to another; and (4) additional information has been noted and coded properly.

B.3.f HSLS:09 Student Financial Aid Record Collection (FAR)

Several options will be offered to institutions for providing the financial aid records for students, similar to those used for ELS:2002 FAFS and NPSAS:16; institution coordinators will be invited to select the methodology that is least burdensome and most convenient for the institution. The options for providing financial aid record data are described below.

Student records obtained via a web-based data entry interface. The web-based data entry interface allows the coordinator to enter data by student, by year.

Student records obtained by completing an Excel workbook. An Excel workbook will be created for each institution and will be preloaded with each sampled student’s ID, name, date of birth, and SSN (if available). To facilitate simultaneous data entry by different offices within the institution, the workbook contains a separate worksheet for each of the following topic areas: Student Information, Financial Aid, Enrollment, and Budget. The user will download the Excel worksheet from the secure NCES postsecondary data portal website, enter the data, and then upload the data to the website. Validation checks will occur both within Excel as data are entered and when the data are uploaded via the website. Data will be imported into the web application such that institution staff can check their data for quality control purposes.

Student records obtained by uploading CSV (comma separated values) files. Institutions with the means to export data from their internal database systems to a flat file may use this method of supplying financial aid records. Institutions that select this method will be provided with detailed import specifications, and all data uploading will occur through the secure NCES postsecondary data portal website. Like the Excel workbook option, data will be imported into the web application such that institution staff can check their data before finalizing.

Support and follow-up for student financial aid records collection. Institution coordinators will receive a guide that provides instructions for accessing and using the website. In conjunction with the transcript collection, RTI institution contacting staff will notify institutions that the student financial aid records data collection has begun and will follow up (by telephone, mail, or e-mail) as needed. Project staff will be available by telephone or by email to provide assistance if institution staff have questions or encounter problems.

B.4 Tests of Procedures and Methods

The design of the HSLS:09 main study—in particular, its use of responsive design (an approach in which nonresponding sample members predicted to be most likely to contribute to nonresponse bias in different estimates of interest are identified at multiple points during data collection and targeted with changes in protocol to increase their participation and to reduce nonresponse bias)—expands on data collection experiments designed for a series of NCES studies, including the HSLS:09 second follow-up field test and 2013 Update. The design proposed for the HSLS:09 second follow-up main study builds upon what has been learned in HSLS:09 and other NCES studies, most recently BPS:12/14. The following sections describe the design that was implemented in the HSLS:09 second follow-up field test, present results from the field test, and recommend a responsive design plan for the main study implementation.

B.4.a Field Test Experimental Design

Overview. The HSLS:09 2013 Update demonstrated that the models used to identify sample members who are underrepresented with regard to the key survey variables were successful. Likewise, the 2013 Update interventions used (e.g., prepaid incentives, increasing incentive offers) among the cases targeted based on the model results also seemed effective in encouraging cooperation among targeted cases, although the interventions were not evaluated experimentally. In order to adequately assess the effectiveness of specific interventions, a randomized design was proposed for the HSLS:09 second follow-up field test to allow for experimental evaluation of interventions. Due to the small size of the field test sample and because some of the interventions were under consideration for all main study cases (not solely cases targeted by responsive design methods), the interventions were implemented based on random assignment to treatment conditions rather than from a predetermined threshold from the responsive design model.

Experiments. The assessment of different interventions in a full factorial experimental design from the field test was conducted to inform the most effective and cost-efficient treatments to be used in the main study. In the second follow-up field test, four interventions were tested experimentally:

Timing of prepaid incentive (early vs. late): A prepaid incentive of $5 with the start of data collection mailing vs. a prepaid incentive of $5 after 6 weeks of data collection (after 3 weeks of web-only data collection and 3 weeks of outbound telephone calls);

Baseline incentive offer amount ($0 vs. $15): A $15 promised incentive vs. no promised incentive;

Incentive “boost” offer amount ($0 vs. $15 vs. $30): An additional incentive offer to supplement the initial offer in one of two values (or no boost) approximately 8 weeks into data collection; and

Additional “boost” vs. abbreviated interview: All remaining nonrespondents received either an additional $25 incentive offer or an abbreviated interview offer (approximately 12 days before the end of data collection).

The schedule of interventions is outlined in exhibit B-2. For ease of presentation, the first two interventions are presented as four distinct groups. A full factorial experimental design (2*2*3*2) was implemented as some interventions could interact; for example, the $15 baseline offered incentive could have been most effective when coupled with a prepaid incentive. The 1,100 sample cases were allocated equally across the treatment conditions.
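As an illustration of the factorial structure described above, the following minimal sketch enumerates the 2*2*3*2 = 24 treatment cells and randomly allocates 1,100 hypothetical case IDs to them. The label strings, the function name `allocate`, the seed, and the decision to assign all four factors up front are assumptions made for illustration; in the field test the later treatments applied only to cases that were still nonrespondents at that point, and the actual randomization procedure is not documented here.

```python
import itertools
import random
from collections import Counter

# Factor levels taken from the four interventions; the label strings are illustrative.
TIMING = ("prepaid at start", "prepaid at week 6")
BASELINE = ("no baseline incentive", "$15 baseline incentive")
BOOST = ("no boost", "$15 boost", "$30 boost")
FINAL = ("additional $25", "abbreviated interview")


def allocate(case_ids, seed=0):
    """Randomly allocate cases to the 24 cells of the 2*2*3*2 design.

    Cases are shuffled, split evenly into the four baseline-by-timing groups,
    and then cycled through the six boost-by-final-treatment cells in each group.
    """
    rng = random.Random(seed)  # fixed seed only so the sketch is reproducible
    ids = list(case_ids)
    rng.shuffle(ids)

    groups = list(itertools.product(TIMING, BASELINE))  # 4 groups
    later_cells = list(itertools.product(BOOST, FINAL))  # 6 cells per group
    per_group = len(ids) // len(groups)

    assignment = {}
    for g, group in enumerate(groups):
        block = ids[g * per_group:(g + 1) * per_group]
        for i, case_id in enumerate(block):
            assignment[case_id] = group + later_cells[i % len(later_cells)]
    return assignment


ASSIGNMENT = allocate(range(1, 1101))
CELL_SIZES = Counter(ASSIGNMENT.values())
GROUP_SIZES = Counter(cell[:2] for cell in ASSIGNMENT.values())

print(len(CELL_SIZES), "cells;", dict(GROUP_SIZES))
```

Because 1,100 is not divisible by 24, cell sizes in this sketch differ by at most one case, while each of the four baseline-by-timing groups receives 275 cases.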

Exhibit B-2. Schedule of interventions in the HSLS:09 second follow-up field test

| Group assignment | Group A | Group B | Group C | Group D |
|---|---|---|---|---|
| Baseline incentive | No baseline incentive | Baseline incentive amount: $15 | No baseline incentive | Baseline incentive amount: $15 |
| Timing of $5 prepaid incentive | Week 6 | Week 6 | With start of data collection mailing | With start of data collection mailing |
| Incentive boost (in addition to baseline promised incentive) (Week 9) | No boost, $15, or $30 | No boost, $15, or $30 | No boost, $15, or $30 | No boost, $15, or $30 |
| Final treatment, all remaining nonrespondents (Weeks 13 and 14) | Random assignment of nonrespondents to either $25 additional incentive or an abbreviated interview (all groups) | | | |

Note: We assume that a main effect of five percentage points (i.e., 45% vs. 50%) in response rates due to offering a baseline incentive or the timing of the prepaid incentive could be detected at alpha=.05 with power=.84 (one-tailed test). For the incentive boost, it should be possible to detect a difference of five percentage points (i.e., 59% vs. 64%) at alpha=.05 with power=.47, between two of the three conditions (one-tailed test).

Exhibit B-3. Response Rates by Experimental Condition and Phase of Data Collection

| Group assignment | Total | Group A | Group B | Group C | Group D |
|---|---|---|---|---|---|
| Baseline contingent incentive | | No baseline contingent incentive | Baseline contingent incentive amount: $15 | No baseline contingent incentive | Baseline contingent incentive amount: $15 |
| Timing of $5 prepaid incentive | | Timing of $5 prepaid incentive: | Timing of $5 prepaid incentive: | With start of data collection mailing | With start of data collection mailing |
| Overall response rate | 50.5 (n=1,100) | 45.5 (n=275) | 52.4 (n=275) | 47.6 (n=275) | 56.4 (n=275) |

Within phase response rates (within phase completions / remaining sample)

| Phase | Total | Group A | Group B | Group C | Group D |
|---|---|---|---|---|---|
| 4/15/2015 - 5/3/2015 (Phase 1) | 12.2 (n=1,100) | 6.9 (n=275) | 15.6 (n=275) | 8.7 (n=275) | 17.8 (n=275) |
| 5/4/2015 - 5/25/2015 (Phase 2) | 12.5 (n=966) | 9.4 (n=256) | 14.2 (n=232) | 12.0 (n=251) | 15.0 (n=227) |
| 5/26/2015 - 6/7/2015 (Phase 3) | 9.5 (n=845) | 9.5 (n=232) | 10.1 (n=199) | 7.7 (n=221) | 10.9 (n=193) |
| 6/8/2015 - 7/5/2015 (Phase 4) | 17.0 (n=765) | 17.6 (n=210) | 16.8 (n=179) | 12.7 (n=204) | 21.5 (n=172) |
| 7/6/2015 - 7/17/2015 (Phase 5) | 14.2 (n=635) | 13.3 (n=173) | 12.1 (n=149) | 19.1 (n=178) | 11.1 (n=135) |

Phase 4 response rates by treatment: contingent incentive boost (in addition to baseline promised incentive), by boost amount

| Group | Cum. resp. rate before phase 4 | No boost | $15 | $30 |
|---|---|---|---|---|
| Total (phase 4 rate: 17.0, n=765) | | | | |
| Group A | 23.6 (n=275) | 11.4 (n=70) | 23.2 (n=69) | 18.3 (n=71) |
| Group B | 34.9 (n=275) | 11.9 (n=59) | 23.7 (n=59) | 14.8 (n=61) |
| Group C | 25.8 (n=275) | 4.5 (n=67) | 14.7 (n=68) | 18.8 (n=69) |
| Group D | 37.5 (n=275) | 21.4 (n=56) | 27.6 (n=58) | 15.5 (n=58) |

Phase 5 response rates by treatment: abbreviated interview vs. additional $25

| Group | Cum. resp. rate before phase 5 | Abbreviated interview | Additional $25 |
|---|---|---|---|
| Total (phase 5 rate: 14.2, n=635) | | | |
| Group A | 37.1 (n=275) | 10.3 (n=87) | 16.3 (n=86) |
| Group B | 45.8 (n=275) | 8.0 (n=75) | 16.2 (n=74) |
| Group C | 35.3 (n=275) | 17.0 (n=88) | 21.1 (n=90) |
| Group D | 50.9 (n=275) | 4.5 (n=67) | 17.6 (n=68) |

Note: Results do not include partial respondents.
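The within-phase rates in Exhibit B-3 are defined as within-phase completions divided by the remaining sample. The minimal sketch below applies that definition to the Total column, under the assumption (not stated in the exhibit) that cases leave the remaining pool only by completing the interview, so that completions in a phase equal the drop in remaining sample size between consecutive phases. The variable and function names are illustrative.

```python
# Remaining (not yet responding) sample at the start of phases 1-5,
# taken from the Total column of Exhibit B-3.
REMAINING_AT_START = [1100, 966, 845, 765, 635]


def within_phase_rates(remaining):
    """Within-phase response rate (percent) for each phase with a known successor.

    Assumes completions in a phase equal the decrease in the remaining sample.
    """
    rates = []
    for start, next_start in zip(remaining, remaining[1:]):
        completions = start - next_start
        rates.append(100 * completions / start)
    return rates


PHASE_RATES = within_phase_rates(REMAINING_AT_START)

# Cumulative response rate before phase 5, relative to the full sample.
CUMULATIVE_BEFORE_PHASE_5 = 100 * (REMAINING_AT_START[0] - REMAINING_AT_START[-1]) / REMAINING_AT_START[0]

for phase, rate in enumerate(PHASE_RATES, start=1):
    print(f"Phase {phase}: {rate:.1f}")
```

Under this assumption the sketch covers phases 1 through 4 only, because the number of cases remaining after phase 5 is not shown in the exhibit.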

B.4.b Field Test Experimental Results

Summary. Exhibit B-3 presents the response rates by experimental condition and phase of data collection. The results suggest that (1) the baseline promised incentive has a substantial effect that carries through the end of data collection; (2) the timing of the prepaid incentive has no effect (potentially making the prepaid incentive unnecessary if a promised incentive is offered at the onset); and (3) offering an additional incentive at the end of data collection increases response rates more than offering an abbreviated interview.

Significance testing. Formal significance tests confirmed the above results. We found a significant effect of $15 promised incentive overall (groups AC vs. BD; Chi-square=6.72, p=0.009). We also found a significant effect of $15 promised incentive when comparing the no incentive/early prepaid group to the $15 promised/early prepaid group (C vs. D; Chi-square=4.20, p=0.04). However, there was no significant difference when comparing the no incentive/late prepaid group to the $15 promised/late prepaid group (A vs. B; Chi-square =2.63, p=0.11).

We found no effect of early vs. late prepaid incentive when comparing the no incentive/late prepaid vs. no incentive/early prepaid groups (A vs. C) and the $15 promised/late prepaid vs. $15 promised/early prepaid groups (B vs. D) (Chi-square=0.26, p=0.61 and Chi-square=0.89, p=0.35, respectively). Prepaid incentive timing also did not seem to make a difference when pooling cases across the $15 promised and no baseline promised incentive conditions (groups AB vs. CD; Chi-square=1.05, p=0.31).
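The group comparisons above can be illustrated with a Pearson chi-square test on a 2x2 table (responded vs. not, by condition). The sketch below is an assumption-laden reconstruction rather than the analysts' actual procedure: respondent counts are inferred by rounding the overall response rates in Exhibit B-3 times n=275 per group, no continuity correction is applied, and the function and variable names are illustrative.

```python
import math


def pearson_chi_square(resp_x, n_x, resp_y, n_y):
    """Pearson chi-square (1 df, no continuity correction) for two response rates."""
    a, b = resp_x, n_x - resp_x  # condition X: respondents, nonrespondents
    c, d = resp_y, n_y - resp_y  # condition Y: respondents, nonrespondents
    n = n_x + n_y
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    p_value = math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-square with 1 df
    return chi2, p_value


# Assumption: counts inferred from Exhibit B-3 overall rates, not reported directly.
N_PER_GROUP = 275
OVERALL_RATES = {"A": 45.5, "B": 52.4, "C": 47.6, "D": 56.4}
RESPONDENTS = {g: round(rate / 100 * N_PER_GROUP) for g, rate in OVERALL_RATES.items()}


def pooled(groups):
    """Respondent count and sample size for one or more pooled groups, e.g. 'AC'."""
    return sum(RESPONDENTS[g] for g in groups), N_PER_GROUP * len(groups)


COMPARISONS = [("AC", "BD"), ("C", "D"), ("A", "B"), ("A", "C"), ("B", "D"), ("AB", "CD")]
RESULTS = {
    f"{x} vs. {y}": pearson_chi_square(*pooled(x), *pooled(y)) for x, y in COMPARISONS
}

for label, (chi2, p_value) in RESULTS.items():
    print(f"{label}: Chi-square={chi2:.2f}, p={p_value:.3f}")
```

With these inferred counts, the uncorrected statistics appear to agree with the reported chi-square values to two decimal places, which suggests (but does not confirm) that the reported tests were computed without a continuity correction.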

For the incentive boost experiment, we found a significant effect of any boost incentive compared with no incentive boost (Chi-square=6.90, p=0.009) and of a $15 incentive boost compared with no incentive boost (Chi-square=9.22, p=0.002). The differences between the $15 and $30 incentive boosts, as well as between $0 and $30, were not significant (Chi-square=2.09, p=0.15 and Chi-square=2.67, p=0.10, respectively). We found a significant effect of the boost incentive among cases that were not offered $15 promised at baseline (Chi-square=7.03, p=0.008 for the $15 condition and Chi-square=6.65, p=0.01 for the $30 condition); the difference between the $15 and $30 boosts was not significant (Chi-square=0.01, p=0.93). The boost incentive did not make a difference for cases that were initially offered the $15 promised incentive at baseline (Chi-square=2.90, p=0.09 for the $15 condition and Chi-square=0.09, p=0.77 for the $30 condition).

Finally, for weeks 13 and 14 we found that the additional $25 incentive boost performed significantly better than the abbreviated survey overall (Chi-square=7.37, p=0.007) and for cases that were initially offered the $15 promised incentive at baseline and $15 or $30 boost incentive (Chi-square=7.71, p=0.005). However, no significant differences were found between the additional $25 incentive and the abbreviated interview for cases that were not offered $15 at baseline and were not offered the first incentive boost (Chi-square=1.89, p=0.17). Similarly, no significant differences between the $25 incentive and the abbreviated interview were found for cases that were not offered the initial $15 promised, but were offered a $15 or $30 boost incentive (Chi-square=0.43, p=0.51).

B.4.c Field Test Responsive Design Simulations

We will employ responsive design methods in the main study to produce a responding sample that most closely resembles the population (i.e., fall 2009 9th-graders) while allocating resources most efficiently. We will do so by selectively targeting for special interventions a subset of cases that might contribute most to potential nonresponse bias if they do not respond.

In preparation for the main study, we have analyzed the field test results by simulating what may have happened if we targeted specific cases based on a responsive design model, rather than random assignment to experiment conditions. To identify cases for targeted treatments, we refined the model that was developed and evaluated in the 2013 Update by making use of data obtained as part of the field test 2012 Update and high school transcript collection. The model for the second follow-up simulation used the field test versions of variables used in the 2013 Update responsive design modeling as augmented by a subset of variables from the 2012 Update field test survey responses.

In order to determine if prioritizing cases in a responsive design framework is worth the effort, we need to address two questions:

Can the responding sample better represent the population of interest (i.e., fall 2009 9th graders as of 2016) by gaining participation from sample members whose characteristics differ from current respondents and who otherwise might not respond (sample representativeness)?

Are the interventions tested in the field test effective when targeting cases (intervention effectiveness)?

Sample representativeness. The field test sample size does not allow us to answer these questions definitively, but the simulations allow us to test procedures and analyze the results as if the responsive design model had been used. To address the first question, we produced estimates of sample allocation versus respondent allocation for certain variables that were part of the responsive design model. Exhibit B-4 shows a small number of example sample-representativeness measures. For each measure shown in exhibit B-4, the respondent percentage at the end of data collection was closer to the overall sample percentage than the respondent percentage prior to phase 4 was. For example, Hispanics comprised 3.7 percent of the field test sample. Before phase 4, Hispanics represented only 2.8 percent of the responding sample, but the percentage grew to 3.5 by the end of data collection. As another example, students from high schools in the Midwest comprised 19.0 percent of the field test sample. The responding sample percentage was 22.9 percent prior to phase 4, 21.8 percent prior to phase 5, and 20.8 percent at the end of field test data collection.

The simulation included a handful of 2012 Update variables; however, those variables had considerable missingness due to 2012 Update unit nonresponse (imputation was not performed for the simulation). For each of the 2012 Update variables in the model, the final set of second follow-up field test respondents included more cases with unknown values than the set of respondents prior to the start of phase 4. This means that phases 4 and 5 brought in more cases that, had they not responded, would have had an adverse impact on potential nonresponse bias.

Exhibit B-4. Simulated impact of responsive design interventions on sample representativeness

| Student/school indicator | Percent among respondents before phase 4 | Percent among respondents before phase 5 | Percent among all field test respondents | Percent among overall field test sample |
|---|---|---|---|---|
| Male | 51.8 | 48.7 | 49.6 | 48.1 |
| Asian | 5.1 | 4.6 | 4.0 | 4.1 |
| Hispanic | 2.8 | 3.2 | 3.5 | 3.7 |
| Suburban base-year school | 43.9 | 44.1 | 42.9 | 38.5 |
| Town base-year school | 4.0 | 5.2 | 5.2 | 6.4 |
| Rural base-year school | 15.4 | 16.1 | 17.1 | 19.7 |
| Northeast base-year school | 15.4 | 17.8 | 18.3 | 20.7 |
| Midwest base-year school | 22.9 | 21.8 | 20.8 | 19.0 |
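One simple way to read Exhibit B-4 is to compute, for each indicator, the absolute gap between the respondent percentage and the overall sample percentage at each point in data collection. The sketch below does this with the values from the exhibit; the gap measure and all names are illustrative assumptions and not necessarily the representativeness metric used by the study team.

```python
# Values from Exhibit B-4: (respondents before phase 4, respondents before phase 5,
# all field test respondents, overall field test sample), in percent.
EXHIBIT_B4 = {
    "Male": (51.8, 48.7, 49.6, 48.1),
    "Asian": (5.1, 4.6, 4.0, 4.1),
    "Hispanic": (2.8, 3.2, 3.5, 3.7),
    "Suburban base-year school": (43.9, 44.1, 42.9, 38.5),
    "Town base-year school": (4.0, 5.2, 5.2, 6.4),
    "Rural base-year school": (15.4, 16.1, 17.1, 19.7),
    "Northeast base-year school": (15.4, 17.8, 18.3, 20.7),
    "Midwest base-year school": (22.9, 21.8, 20.8, 19.0),
}


def absolute_gaps(row):
    """Absolute percentage-point gap from the overall sample at each point."""
    before_phase_4, before_phase_5, final, sample = row
    return tuple(round(abs(x - sample), 1) for x in (before_phase_4, before_phase_5, final))


GAPS = {indicator: absolute_gaps(row) for indicator, row in EXHIBIT_B4.items()}

for indicator, (gap_4, gap_5, gap_final) in GAPS.items():
    print(f"{indicator}: {gap_4} -> {gap_5} -> {gap_final}")
```

For every indicator listed, the gap at the end of data collection is smaller than the gap before phase 4, although the gap before phase 5 is not always between the two.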

Intervention effectiveness. In order to gauge a potential answer to the second question, we conducted the following analyses:

Estimate the bias likelihood for the sample before the beginning of each of the two interventions (phase 4: incentive boost amount comparison; and phase 5: comparison between abbreviated interview and added incentive boost).

Order the bias likelihood scores (model-derived predicted probabilities) for the nonrespondents at the end of each of the pre-intervention phases and use the median to divide them into high- and low-priority cases.

Within the high-priority cases (those above the median), compare experimental conditions to see if the suggested interventions would be effective.
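The median split and within-group comparison described in the steps above can be sketched as follows. All data here are hypothetical toy values invented for illustration (they are not field test results), the bias likelihood scores are taken as given rather than estimated from a model, and the function names are assumptions.

```python
import statistics


def high_priority_cases(scores):
    """Return the IDs of nonrespondents whose bias likelihood score is above the median."""
    cutoff = statistics.median(scores.values())
    return {case_id for case_id, score in scores.items() if score > cutoff}


def response_rates_by_condition(case_ids, conditions, responded):
    """Within-phase response rate (percent) for each experimental condition."""
    totals, completes = {}, {}
    for case_id in case_ids:
        condition = conditions[case_id]
        totals[condition] = totals.get(condition, 0) + 1
        completes[condition] = completes.get(condition, 0) + int(responded[case_id])
    return {condition: 100 * completes[condition] / totals[condition] for condition in totals}


# Hypothetical toy data: case ID -> score, assigned condition, and phase outcome.
SCORES = {1: 0.10, 2: 0.80, 3: 0.35, 4: 0.65, 5: 0.20, 6: 0.90, 7: 0.55, 8: 0.40}
CONDITIONS = {1: "no boost", 2: "$15 boost", 3: "$15 boost", 4: "no boost",
              5: "no boost", 6: "$15 boost", 7: "no boost", 8: "$15 boost"}
RESPONDED = {1: False, 2: True, 3: True, 4: False,
             5: True, 6: False, 7: False, 8: False}

HIGH_PRIORITY = high_priority_cases(SCORES)
HIGH_PRIORITY_RATES = response_rates_by_condition(HIGH_PRIORITY, CONDITIONS, RESPONDED)

print(sorted(HIGH_PRIORITY), HIGH_PRIORITY_RATES)
```

Because the split is at the median, the targeted group is roughly half of the remaining nonrespondents, which is why cell sizes within the targeted half-sample become small in a field test of this size.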

Responsive design simulation results. Exhibit B-5 shows the results of the phase 4 (incentive boost experiment) simulation with the targeted half-sample based on responsive design modeling immediately prior to phase 4 initiation. The results for the responsive design cases hint at the possible effectiveness of the incentive boost, though the numbers are too small for significance testing. Exhibit B-6 shows the results of the phase 5 (abbreviated interview vs. additional incentive boost experiment) simulation with the newly identified targeted half-sample based on responsive design modeling immediately prior to phase 5 initiation. The results for the responsive design cases again hint at the greater effectiveness of the additional incentive boost as compared with the abbreviated interview offer, though the numbers are too small for significance testing. This simulation allowed us to test procedures in the field test and to demonstrate that the field-tested interventions might be effective for targeted cases in the main study.

Exhibit B-5. Simulated effectiveness of responsive design interventions: incentive boost

| Intervention | Within phase response rate for all cases | Within phase response rate for targeted half-sample of nonrespondents |
|---|---|---|
| Total | 17.0 | 9.4 |
| No incentive boost | 11.9 | 6.1 |
| $15 incentive boost | 22.0 | 10.0 |
| $30 incentive boost | 17.0 | 11.8 |

Exhibit B-6. Simulated effectiveness of responsive design interventions: abbreviated interview versus additional incentive boost

| Intervention | Within phase response rate for all cases | Within phase response rate for targeted half-sample of nonrespondents |
|---|---|---|
| Total | 14.2 | 7.1 |
| Abbreviated interview | 10.4 | 4.1 |
| $25 incentive boost | 17.9 | 10.2 |

B.4.d Main Study Plans

NCES and RTI are working closely together to design a data collection approach that makes use of evaluations from prior interventions that were used to improve sample representativeness by ensuring that the responding sample is as similar as possible to the total sample. In previous rounds of HSLS:09 and in other NCES studies (such as BPS:12/14, B&B:08/12, and ELS:2002 third follow-up), responsive designs have been used to improve sample representativeness in key survey variables. The proposed main study data collection plan has been designed to maximize data quality through a responsive design approach in which variance between the responding sample and the overall sample is estimated at several points during data collection. An advantage of the proposed responsive design is that it allows us to determine, during data collection, how representative the responding sample is of the total sample, so that we can focus efforts and resources on bringing in the cases that are most needed to achieve balance in the responding sample.

Plans for the HSLS:09 second follow-up main study are based upon 1) results of incentive experiments and responsive design modeling simulations from the HSLS:09 second follow-up field test, 2) results from related longitudinal studies, and 3) prior experience with the HSLS:09 cohort. This section describes plans for responsive design in the main study data collection. In particular, there are three subgroups of interest that will be handled differently. This section describes the phases of data collection and how and when interventions will be implemented and evaluated. Finally, we discuss the development of the response likelihood and bias likelihood models that will be used to identify cases for targeted treatments.

Sample subgroup classification. In the HSLS:09 second follow-up main study, there will be three subgroups of special interest.

Subgroup 1 (high school late/alternative/non-completers) will be the subset of sample members who, as of the 2013 Update, had not completed high school, were still enrolled in high school, received an alternative credential, completed high school late, or experienced a dropout episode with unknown completion status.

Subgroup 2 (ultra-cooperative respondents) includes sample members who participated in the base year, first follow-up, and 2013 Update without an incentive offer. These cases were also early web respondents in the 2013 Update and, by definition, are high school completers.

Subgroup 3 (high school completers and unknown high school completion status) will include cases that, as of the 2013 Update, were known on-time or early regular diploma completers (and not identified as ultra-cooperative) and cases with unknown high school completion status who were not previously identified as ever having a dropout episode.

Calibration subsamples. To determine optimal incentive amounts, a calibration subsample will be selected from each of the aforementioned subgroups to begin data collection ahead of the main sample. A similar approach was used successfully in BPS:12/14, where approximately 10 percent of that sample (3,700 cases) was selected and fielded seven weeks prior to the rest of the BPS:12/14 sample. The experimental subsample was treated in advance of the remaining cases, and after analyzing the results for the experimental sample and consultation with OMB, the successful treatment was implemented with the remaining sample. In the HSLS:09 second follow-up main study, a similar approach is proposed with the HSLS:09 calibration subsamples fielded six weeks prior to the rest of the HSLS:09 sample. Exhibit B-7 shows the estimated size of each subgroup, the percentage of cases to be selected for the calibration subsample, and the estimated number of cases in the calibration sample.

Exhibit B-7. Calibration Sample Sizes, by Subgroup

| Subgroup Number | Subgroup Description | Main Sample | Calibration Sample | Calibration Percent |
| --- | --- | --- | --- | --- |
| 1 | High School Late/Alternative/Non-Completers: Non-completers, late completers, still enrolled, and alternative credential as of the 2013 Update as well as ever dropouts with no completion status | 2,545 | 509 | 20% |
| 2 | Ultra-Cooperative Respondents: High school completers who participated in base year and the first follow-up, and completed the 2013 Update in early web period, with no incentive | 1,027 | 154 | 15% |
| 3 | All Other High School Completers and Unknown Cases: HS Diploma completed early/on-time unknown or unknown completion status with no known dropout episode | 19,747 | 1,975 | 10% |
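The calibration sample sizes in Exhibit B-7 follow from applying each subgroup's calibration percentage to its estimated size. The sketch below reproduces that arithmetic and illustrates one way a subsample could be drawn within each subgroup. The case identifiers, the `select_calibration` helper, the fixed seed, and the use of simple random sampling are assumptions made for illustration; this document does not specify the selection mechanism.

```python
import random

# Estimated subgroup sizes and calibration percentages from Exhibit B-7.
SUBGROUPS = {
    1: {"size": 2545, "pct": 0.20},
    2: {"size": 1027, "pct": 0.15},
    3: {"size": 19747, "pct": 0.10},
}


def calibration_size(size, pct):
    """Number of calibration cases, rounded to the nearest whole case."""
    return round(size * pct)


def select_calibration(case_ids, pct, rng):
    """Draw a simple random calibration subsample (illustrative assumption)."""
    n = calibration_size(len(case_ids), pct)
    return set(rng.sample(case_ids, n))


rng = random.Random(2009)  # fixed seed so the sketch is reproducible
sizes = {}
for number, info in SUBGROUPS.items():
    # Hypothetical case IDs; real IDs would come from the sample file.
    case_ids = [f"S{number}-{i:05d}" for i in range(info["size"])]
    calibration = select_calibration(case_ids, info["pct"], rng)
    sizes[number] = len(calibration)
    print(number, info["size"], sizes[number])
```

The resulting counts can be compared against the Calibration Sample column of Exhibit B-7.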

Data collection phases, treatments, and evaluations. For the second follow-up main study, the data collection plan includes a phased responsive design strategy specifically aimed at improving sample representativeness among the final survey participants. Exhibit B-8 presents the schedule for the planned phases of data collection for both the calibration samples and the main samples. Exhibit B-9 summarizes the baseline and boost incentives to be tested for each subgroup. The phases will proceed as follows:

Baseline incentive (phase 1). During this beginning phase of data collection, the survey will be open exclusively for self-administered interviews via the web. Web response will remain open throughout the entire data collection. As described above, the calibration samples will allow for testing of incentive amounts on a subset of cases, and the results will inform the implementation plan for the main samples. Prior to the start of the main sample data collection for phase 1, calibration sample response rates will be evaluated. An ANOVA-based model will be used to perform pairwise contrasts between the different incentive amounts offered to the treatment and control groups in each phase. NCES and OMB will meet to review the results of the calibration experiment and determine the optimal incentive amount for each of the subgroups.

Subgroup 1 (high school late/alternative/non-completers) will be offered 3 different baseline incentive amounts ($30, $40, or $50). The optimal amount (to be determined in consultation with OMB) will be offered to all cases in the subgroup 1 main sample.

Subgroup 2 (ultra-cooperative respondents) will not be offered a baseline incentive. The subgroup 2 calibration sample response rate will be evaluated against early response rates for other cohorts (such as BPS:12/14 and ELS:2002 third follow-up) to estimate a “successful” response benchmark for HSLS:09. If it is determined that the subgroup 2 calibration sample response rate is not successful, we will discuss with OMB the possibility of offering a baseline incentive (amount to be determined in consultation with OMB) to the subgroup 2 main sample.

Subgroup 3 (high school completers and unknowns) will be offered 6 different incentive amounts, ranging from $15 to $40 ($15, $20, $25, $30, $35, or $40). The $15 starting point for this baseline incentive calibration experiment is based on the results of the HSLS:09 second follow-up field test experiment. The optimal amount (to be determined in consultation with OMB) will be offered to all cases in the subgroup 3 main sample.
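The pairwise contrasts described for the calibration evaluation can be sketched as follows. This is a simplified illustration, not the study's analysis code: it treats response as a 0/1 outcome, pools the within-arm variance as a one-way ANOVA would, and reports a t statistic for each pair of incentive amounts. The arm sizes are taken from Exhibit B-9 for subgroup 1; the respondent counts are hypothetical placeholders, and the sketch makes no adjustment for multiple comparisons.

```python
from itertools import combinations
from math import sqrt

# Arm sizes follow Exhibit B-9 for subgroup 1; respondent counts are
# HYPOTHETICAL placeholders, not study results.
arms = {
    30: {"n": 170, "respondents": 51},
    40: {"n": 170, "respondents": 60},
    50: {"n": 169, "respondents": 68},
}


def pairwise_contrasts(arms):
    """Return {(amount_a, amount_b): (rate difference, t statistic)}."""
    rates = {a: d["respondents"] / d["n"] for a, d in arms.items()}
    total_n = sum(d["n"] for d in arms.values())
    # Within-arm sum of squares for a 0/1 outcome is n * p * (1 - p).
    ss_within = sum(d["n"] * rates[a] * (1 - rates[a]) for a, d in arms.items())
    mse = ss_within / (total_n - len(arms))  # pooled error variance
    results = {}
    for a, b in combinations(sorted(arms), 2):
        diff = rates[b] - rates[a]
        se = sqrt(mse * (1 / arms[a]["n"] + 1 / arms[b]["n"]))
        results[(a, b)] = (diff, diff / se)
    return results


contrasts = pairwise_contrasts(arms)
for (a, b), (diff, t) in contrasts.items():
    print(f"${b} vs ${a}: difference = {diff:.3f}, t = {t:.2f}")
```

Each t statistic would be referred to a t distribution with degrees of freedom equal to the total number of cases minus the number of arms.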

Exhibit B-8. Data Collection Schedule and Phases.

Outbound CATI prompting (phase 2). Phase 1 data collection is self-administered via the web (except when sample members call in to the help desk); phase 2 adds a second mode of data collection. Telephone interviewers will begin making outbound calls to prompt for self-administration or to conduct telephone interviews. No additional incentives will be offered during phase 2.

Subgroup 1 will begin outbound CATI earlier than the other subgroups, to allow additional time for telephone interviewers to work these high-priority cases.

Incentive boosts (phases 3 and 4). Phases 3 and 4 introduce the use of responsive design with the bias likelihood model. Targeted cases will be offered an incentive boost in addition to the baseline incentive offer. The calibration samples will allow for testing of incentive boost amounts on a subset of the remaining nonrespondents in phases 3 and 4, and the results will inform the incentive boost implementation plan for the main samples. Prior to the start of the main sample data collection for phases 3 and 4, calibration sample response rates will be evaluated. An ANOVA-based model will be used to perform pairwise contrasts between the different incentive boost amounts offered to the treatment and control groups in each phase. NCES and OMB will meet to review the results of the calibration experiment and determine the optimal incentive boost amount for each of the subgroups.

Subgroup 1 (high school late/alternative/non-completers) will be offered an incentive boost of either $15 or $25, on top of the baseline incentive they were offered in phase 1. The optimal amount, to be determined in consultation with OMB based on the calibration sample results, will be offered to all remaining nonrespondents in subgroup 1.

The subset of subgroup 2 (ultra-cooperative respondents) cases that are targeted for intervention, based on bias likelihood modeling, will be offered an incentive boost of either $10 or $20, and the optimal amount (to be determined in consultation with OMB) will be offered only to targeted cases among the remaining subgroup 2 nonrespondents.

The subset of subgroup 3 (high school completers and unknowns) cases that are targeted for intervention, based on bias likelihood modeling, will be offered an incentive boost of either $10 or $20, and the optimal amount (to be determined in consultation with OMB) will be offered only to targeted cases among the remaining subgroup 3 nonrespondents.

Exhibit B-9. Main study baseline and incentive boost experiments

| Subgroup | Incentive Phase | Amount | Total Cumulative Incentives Offered | Estimated Number of Cases to be Worked |
| --- | --- | --- | --- | --- |
| High School Late/Alternative/Non-Completers | Base Incentive (all calibration sample cases) | $30 | $30 to $50 | 170 |
| | | $40 | | 170 |
| | | $50 | | 169 |
| | Boost 1 (all remaining calibration sample nonrespondents) | $15 | $45 to $75 | 158 |
| | | $25 | | 158 |
| | Boost 2 (all remaining calibration sample nonrespondents) | $10 | $55 to $95 | 102 |
| | | $20 | | 102 |
| Ultra-Cooperative Respondents | Base Incentive (all calibration sample cases) | $0 | $0 | 154 |
| | Boost 1 (for targeted cases only: combined with subsample 3) | $10 | $10 to $20 targeted; $0 otherwise | (very few if any cases expected to be selected) |
| | | $20 | | |
| | Boost 2 (for targeted cases only: combined with subsample 3) | $10 | $10 to $40 targeted; $0 to $20 otherwise | (very few if any cases expected to be selected) |
| | | $20 | | |
| High School Completers and Unknowns | Base Incentive (all calibration sample cases) | $15 | $15 to $40 | 330 |
| | | $20 | | 329 |
| | | $25 | | 329 |
| | | $30 | | 329 |
| | | $35 | | 329 |
| | | $40 | | 329 |
| | Boost 1 (for targeted cases: 1/2 of non-respondents) | $10 | $25 to $60 targeted; $15 to $40 otherwise | 250 |
| | | $20 | | 250 |
| | Boost 2 (for targeted cases: 1/2 of non-respondents) | $10 | $25 to $80 targeted; $15 to $60 otherwise | 175 |
| | | $20 | | 175 |
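The cumulative ranges in Exhibit B-9 can be reproduced by adding the low and high ends of each incentive component. The sketch below assumes that, for subgroups 2 and 3, a case targeted at boost 2 may or may not have been targeted at boost 1, so an earlier boost contributes $0 at the low end; this reading is inferred from the exhibit's ranges rather than stated in the text. The helper names `cumulative_range` and `maybe` are illustrative.

```python
def cumulative_range(*components):
    """Sum (low, high) dollar ranges across incentive components."""
    low = sum(c[0] for c in components)
    high = sum(c[1] for c in components)
    return low, high


def maybe(boost):
    """A boost the case may not have been offered: low end becomes 0."""
    return (0, boost[1])


BOOST = (10, 20)  # boost amounts tested for subgroups 2 and 3

# Subgroup 1: every remaining nonrespondent is offered each boost.
sub1_boost1 = cumulative_range((30, 50), (15, 25))
sub1_boost2 = cumulative_range((30, 50), (15, 25), (10, 20))

# Subgroup 2: no base incentive; boosts go to targeted cases only.
sub2_boost2_targeted = cumulative_range((0, 0), maybe(BOOST), BOOST)

# Subgroup 3: boosts go to targeted cases only.
sub3_boost1_targeted = cumulative_range((15, 40), BOOST)
sub3_boost2_targeted = cumulative_range((15, 40), maybe(BOOST), BOOST)
sub3_boost2_otherwise = cumulative_range((15, 40), maybe(BOOST))

print(sub1_boost1, sub1_boost2)
print(sub2_boost2_targeted)
print(sub3_boost1_targeted, sub3_boost2_targeted, sub3_boost2_otherwise)
```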

Additional treatments for targeted cases. In addition to the monetary interventions described above, the HSLS:09 second follow-up main study design includes non-monetary treatments to be used with targeted cases.

Field interviewing (phase 5). Field interviewing will be conducted for all targeted nonrespondents at the same time; there will be no time lag between the calibration and main samples. Cases identified for targeted treatment (all high school late/alternative/non-completers, and sample members with high bias likelihood scores) will be considered for field interviewing. The decision to conduct field interviewing for a case may also be determined by other factors, such as the location of a case and its proximity to other likely field cases. Nontargeted cases may be included in field interviewing if it is cost-effective to do so. Conversely, given the expense of field interviewing, cases with a very low response likelihood may not be pursued.

Extended data collection (phase 6). Cases identified for targeted treatment (all high school late/alternative/non-completers, and sample members with high bias likelihood scores) will be part of an extended data collection period. During this period (the last month of data collection), only targeted cases will be actively prompted to participate. Data collection will remain open for all other cases if they choose to participate, but effort to pursue those cases will be suspended.

Model development. A critical element of any responsive design is the method used to identify cases that will receive special treatment. As described above, the primary goal of this approach is to improve sample representativeness. The bias likelihood model will help determine which cases are most needed to balance the responding sample, and the response likelihood model will help determine which cases may not be optimal for pursuing with targeted interventions so that project resources can be most effectively allocated. In this section, we describe our modeling approach and the variables to be considered for use as predictor variables for both the bias likelihood and the response likelihood models. Variables will be drawn from data obtained in prior waves of data collection with this cohort (base-year, first follow-up, and 2013 Update survey data; high school transcripts; school characteristics; sampling frame information; and paradata). The models for the HSLS:09 second follow-up main study have been developed and will be refined from models for previous rounds of HSLS:09, ELS:2002, and other NCES studies, including BPS:12/14.

Response Likelihood Model. The response likelihood model will be run only once, before data collection begins. Using data obtained in prior waves that are correlated with response outcome (primarily paradata variables), we will fit a model predicting response outcome in the 2013 Update. We will then use the coefficients associated with the significant predictors to estimate the likelihood of response in the second follow-up main study, and each sample member will be assigned a likelihood score prior to the start of data collection. Exhibit B-10 lists the universe of predictor variables that will be considered for the response likelihood model.

During data collection, the response likelihood scores will be used as a “filter” to assist in determining intervention resource allocation. For example, cases that have a very high likelihood of participation may not be offered an incentive boost, since they are likely to participate without it. The response likelihood score can also be used to exclude cases with very low likelihood from the field interviewing intervention. We will also consider using the response likelihood score to adjust the classification of cases in the subgroups. For example, cases with very high response likelihood scores could potentially be treated as “ultra-cooperative” cases. The primary objective of the response likelihood model is to provide information that will inform decisions about inclusion or exclusion of targeted cases for interventions, thereby controlling costs.
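The use of the response likelihood score as a filter can be illustrated with a short sketch. Everything specific here is assumed for illustration: the coefficient values, the predictor names, and the two cutoffs are hypothetical, since the actual coefficients would come from the model fit to 2013 Update response outcomes and the thresholds are not stated in this document.

```python
from math import exp

# HYPOTHETICAL coefficients; the real ones would come from the model fit
# to 2013 Update response outcomes.
COEFFICIENTS = {
    "intercept": -0.5,
    "responded_base_year": 0.8,
    "responded_first_followup": 1.1,
    "early_web_2013_update": 1.4,
}
HIGH_CUTOFF = 0.90  # assumed: at or above this, no incentive boost is offered
LOW_CUTOFF = 0.10   # assumed: at or below this, field interviewing is skipped


def response_likelihood(case):
    """Logistic score in (0, 1) computed from 0/1 predictor values."""
    z = COEFFICIENTS["intercept"]
    for name, beta in COEFFICIENTS.items():
        if name != "intercept":
            z += beta * case.get(name, 0)
    return 1 / (1 + exp(-z))


def eligible_interventions(case, targeted):
    """Apply the score as a filter on interventions for one case."""
    score = response_likelihood(case)
    return {
        "incentive_boost": targeted and score < HIGH_CUTOFF,
        "field_interviewing": targeted and score > LOW_CUTOFF,
    }


cooperative = {
    "responded_base_year": 1,
    "responded_first_followup": 1,
    "early_web_2013_update": 1,
}
reluctant = {}  # no prior participation indicators set
for label, case in (("cooperative", cooperative), ("reluctant", reluctant)):
    score = response_likelihood(case)
    print(label, round(score, 3), eligible_interventions(case, targeted=True))
```

In this sketch a targeted case with a very high score is screened out of the incentive boost, while a case with a middling score remains eligible for both interventions.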

Bias Likelihood Model. The bias likelihood model will be used to identify cases that are most unlike the set of sample members who have responded. As was done in the responsive design approach for the 2013 Update, the bias likelihood model will use only key survey and frame variables as predictors to identify nonrespondents most likely to reduce bias in key survey variables if converted to respondents. To calculate bias likelihood, we will run a logistic regression with the second follow-up response outcome as the dependent variable. The bias likelihood model will be run at the beginning of phases 3, 4, 5, and 6 for the calibration samples and at the beginning of phases 3, 4, 5, and 6 for the rest of the cases. (Modeling will be done on the combined sample [calibration cases and rest of cases] prior to phases 5 and 6.) We will then use the coefficients associated with the significant predictors to assign a bias likelihood score for each case. Because the set of respondents and nonrespondents is dynamic, the bias likelihood score for an individual case may change across the phases. The universe of candidate predictor variables has been selected for its analytic importance to the study and is presented in Exhibit B-11.
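A minimal sketch of the bias likelihood calculation follows. It fits a logistic regression of response outcome on two 0/1 predictors using plain gradient ascent, then ranks nonrespondents. Several elements are assumptions rather than the study's method: the toy data are hypothetical, the score is taken to be one minus the predicted probability of response, and targeting half of the nonrespondents simply mirrors the proportion shown for subgroup 3 in Exhibit B-9.

```python
from math import exp


def sigmoid(z):
    return 1 / (1 + exp(-z))


def fit_logistic(rows, outcomes, steps=2000, rate=0.5):
    """Fit intercept and coefficients by batch gradient ascent."""
    beta = [0.0] * (len(rows[0]) + 1)
    n = len(rows)
    for _ in range(steps):
        grad = [0.0] * len(beta)
        for x, y in zip(rows, outcomes):
            p = sigmoid(beta[0] + sum(b * v for b, v in zip(beta[1:], x)))
            err = y - p
            grad[0] += err
            for j, v in enumerate(x):
                grad[j + 1] += err * v
        beta = [b + rate * g / n for b, g in zip(beta, grad)]
    return beta


# HYPOTHETICAL toy data: each profile is two 0/1 key survey or frame
# variables, followed by counts of respondents and nonrespondents.
PROFILES = [((1, 1), 8, 2), ((1, 0), 6, 4), ((0, 1), 5, 5), ((0, 0), 2, 8)]
rows, outcomes = [], []
for profile, n_resp, n_nonresp in PROFILES:
    rows += [profile] * (n_resp + n_nonresp)
    outcomes += [1] * n_resp + [0] * n_nonresp

beta = fit_logistic(rows, outcomes)


def bias_likelihood(x):
    """Assumed score: 1 minus the predicted probability of response."""
    return 1 - sigmoid(beta[0] + sum(b * v for b, v in zip(beta[1:], x)))


nonrespondents = [x for x, y in zip(rows, outcomes) if y == 0]
ranked = sorted(nonrespondents, key=bias_likelihood, reverse=True)
targeted = ranked[: len(ranked) // 2]  # assumed: target half of nonrespondents
print(targeted)
```

Because respondents and nonrespondents change between phases, rerunning the fit at the start of each phase would yield updated scores, which is consistent with the note above that a case's score may change across phases.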

Exhibit B-10. Candidate Variables for the Main Study Response Likelihood Model

Data collection wave |

Variables |

Base year |

Response outcome Response mode Early phase response status |

First follow-up |